[root@node1 ~]# ls

anaconda-ks.cfg calico.tar.gz node-exporter.tar.gz prometheus-cfg.yaml

busybox-1-28.tar.gz grafana_8.4.5.tar.gz prometheus-2-2-1.tar.gz

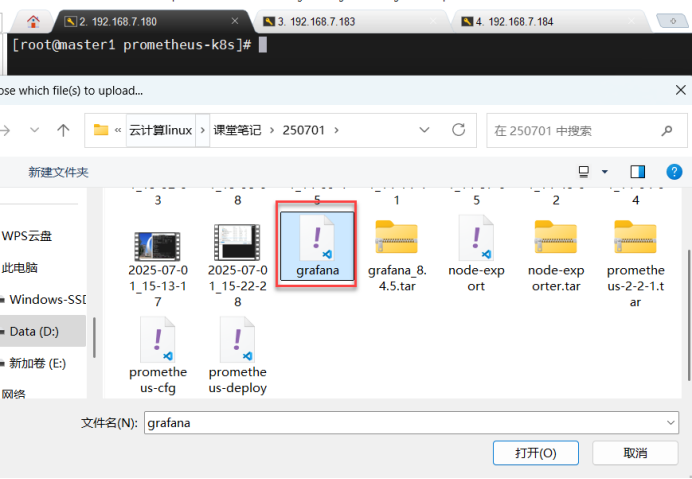

[root@node1 ~]# ctr -n k8s.io images import grafana_8.4.5.tar.gz    # import the Grafana image archive (run on both node1 and node2)

unpacking docker.io/grafana/grafana:8.4.5 (sha256:b862eb2a74c35f1dedea901ec93f8303f8ea6f1125b5005ff719912ae31267b8)...done

[root@node1 ~]#

[root@node2 ~]# ctr -n k8s.io images import grafana_8.4.5.tar.gz

unpacking docker.io/grafana/grafana:8.4.5 (sha256:b862eb2a74c35f1dedea901ec93f8303f8ea6f1125b5005ff719912ae31267b8)...done

[root@node2 ~]#

[root@master1 prometheus-k8s]# vim grafana.yaml    # edit the Grafana manifest

    spec:
      nodeName: node1    # pin the Pod to a worker node: node1 here, or node2 instead (a Pod spec accepts only one nodeName, so do not list both)

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: monitoring-grafana
      namespace: kube-system
    spec:
      replicas: 1
      selector:
        matchLabels:
          task: monitoring
          k8s-app: grafana
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: grafana
        spec:
          nodeName: node1    # template value xianchaonode1 replaced by a node name from this cluster
          containers:
          - name: grafana
            image: grafana/grafana:8.4.5
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 3000
              protocol: TCP
            volumeMounts:
            - mountPath: /etc/ssl/certs
              name: ca-certificates
              readOnly: true
            - mountPath: /var
              name: grafana-storage
            - mountPath: /var/lib/grafana/
              name: lib
            env:
            - name: INFLUXDB_HOST
              value: monitoring-influxdb
            - name: GF_SERVER_HTTP_PORT
              value: "3000"
              # The following env variables are required to make Grafana accessible via
              # the kubernetes api-server proxy. On production clusters, we recommend
              # removing these env variables, setup auth for grafana, and expose the grafana
              # service using a LoadBalancer or a public IP.
            - name: GF_AUTH_BASIC_ENABLED
              value: "false"
            - name: GF_AUTH_ANONYMOUS_ENABLED
              value: "true"
            - name: GF_AUTH_ANONYMOUS_ORG_ROLE
              value: Admin
            - name: GF_SERVER_ROOT_URL
              # If you're only using the API Server proxy, set this value instead:
              # value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy
              value: /
          volumes:
          - name: ca-certificates
            hostPath:
              path: /etc/ssl/certs
          - name: grafana-storage
            emptyDir: {}
          - name: lib
            hostPath:
              path: /var/lib/grafana/
              type: DirectoryOrCreate
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
        # If you are NOT using this as an addon, you should comment out this line.
        kubernetes.io/cluster-service: 'true'
        kubernetes.io/name: monitoring-grafana
      name: monitoring-grafana
      namespace: kube-system
    spec:
      # In a production setup, we recommend accessing Grafana through an external Loadbalancer
      # or through a public IP.
      # type: LoadBalancer
      # You could also use NodePort to expose the service at a randomly-generated port
      # type: NodePort
      ports:
      - port: 80
        targetPort: 3000
      selector:
        k8s-app: grafana

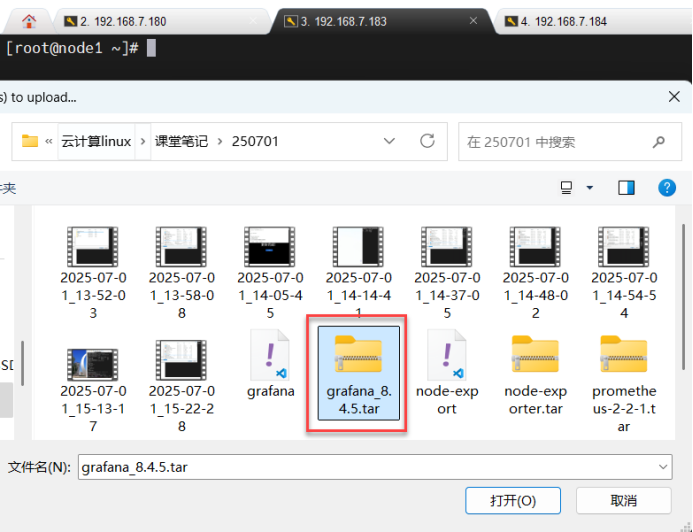

type: NodePort[root@node1 ~]# mkdir -p /var/lib/grafana 在node1、node2节点创建grafana的目录

[root@node1 ~]#

[root@node1 ~]# chmod 777 /var/lib/grafana 给grafana添加777权限

[root@node1 ~]#[root@node2 ~]# mkdir -p /var/lib/grafana

[root@node2 ~]#

[root@node2 ~]#

[root@node2 ~]# chmod 777 /var/lib/grafana

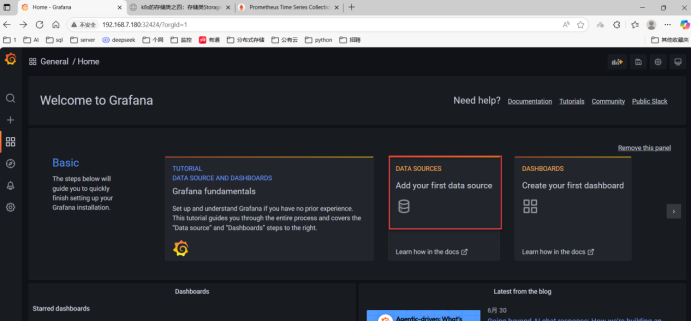

[root@node2 ~]#[root@master1 prometheus-k8s]# kubectl apply -f grafana.yaml 应用grafana的配置文件

deployment.apps/monitoring-grafana created

service/monitoring-grafana created

[root@master1 prometheus-k8s]# kubectl get pods -n kube-system | grep monitor    # show the Grafana Pod

monitoring-grafana-5b6798596c-lw25h 1/1 Running 0 22s

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# kubectl get svc -n kube-system | grep grafana

monitoring-grafana NodePort 10.107.63.241 <none> 80:32424/TCP 4m37s

[root@master1 prometheus-k8s]#
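
The second number in the PORT(S) column (32424 above) is the NodePort, which is the port that exposes Grafana on a node's IP address. A minimal sketch of extracting it, assuming the `port:nodePort/protocol` layout shown in the output above; `nodeport_of` is a hypothetical helper name, and the node IP 192.168.7.183 is used only as an illustration.

```shell
# Hypothetical helper: extract the NodePort from a PORT(S) value such as "80:32424/TCP".
nodeport_of() {
  ports="$1"
  ports="${ports#*:}"            # drop the Service port and the colon -> "32424/TCP"
  printf '%s\n' "${ports%%/*}"   # drop the "/TCP" suffix -> "32424"
}

# Build the URL to open in a browser (the node IP is an assumption for illustration).
echo "http://192.168.7.183:$(nodeport_of '80:32424/TCP')/"
```

The same parsing applies to any NodePort Service listed by `kubectl get svc`.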

1. Lab environment:

Three machines: 192.168.7.180 (master1), 192.168.7.183 (node1), and 192.168.7.184 (node2)

[root@master1 ~]# kubectl create ns monitor-sa    # create the monitoring namespace monitor-sa

namespace/monitor-sa created

[root@master1 ~]# ctr -n k8s.io images import node-exporter.tar.gz    # run on master1, node1, and node2: import the image into the container runtime namespace that Kubernetes uses

unpacking docker.io/prom/node-exporter:v0.965eabe03865ab1595705f4f847009)...done

[root@master1 ~]# mkdir prometheus-k8s    # on master1, create the Prometheus working directory

[root@master1 ~]#

[root@master1 prometheus-k8s]# cd

[root@master1 ~]# ls

anaconda-ks.cfg calico.yaml node_exporter-1.5.0.linux-amd64.tar.gz node-export.yaml

calico.tar.gz kubeadm.yaml node-exporter.tar.gz prometheus-k8s

[root@master1 ~]# cd prometheus-k8s/

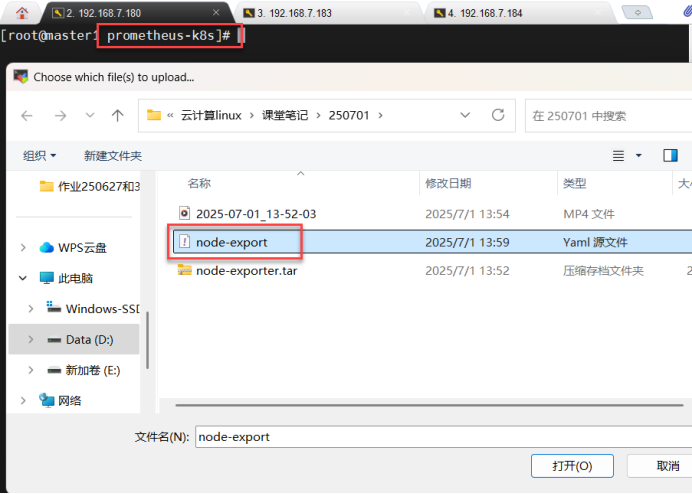

[root@master1 prometheus-k8s]# cp /root/node-export.yaml .    # copy the node-exporter manifest into the prometheus-k8s directory

[root@master1 prometheus-k8s]# ls

node-export.yaml

[root@master1 prometheus-k8s]# kubectl apply -f node-export.yaml    # apply the node-exporter manifest

daemonset.apps/node-exporter created

[root@master1 prometheus-k8s]# kubectl get pods -n monitor-sa    # list the Pods in the monitor-sa namespace

NAME READY STATUS RESTARTS AGE

node-exporter-g49bj 1/1 Running 0 22s

node-exporter-hffgm 1/1 Running 0 22s

node-exporter-xflj5 1/1 Running 0 22s

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# kubectl get pods -n monitor-sa -o wide    # list the Pods in the monitor-sa namespace with node and IP details

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

node-exporter-g49bj 1/1 Running 0 44s 192.168.7.180 master1 <none> <none>

node-exporter-hffgm 1/1 Running 0 44s 192.168.7.183 node1 <none> <none>

node-exporter-xflj5 1/1 Running 0 44s 192.168.7.184 node2 <none> <none>
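
The table above is read by eye: one node-exporter Pod per node, each `1/1` and `Running`. A minimal sketch of the same check as a script, assuming the default column layout of `kubectl get pods` (NAME, READY, STATUS, ...); `all_ready` is a hypothetical helper name.

```shell
# Hypothetical helper: read `kubectl get pods` output on stdin and succeed
# only if every Pod row shows READY n/n and STATUS Running.
all_ready() {
  awk 'BEGIN { bad = 0 }
       NR > 1 { split($2, r, "/"); if (r[1] != r[2] || $3 != "Running") bad = 1 }
       END { exit bad }'
}

# Usage on the master (requires cluster access):
#   kubectl get pods -n monitor-sa | all_ready && echo "all Pods are ready"
```

Note that the helper also succeeds when the input has no Pod rows at all, so it is only meaningful once the DaemonSet has created its Pods.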

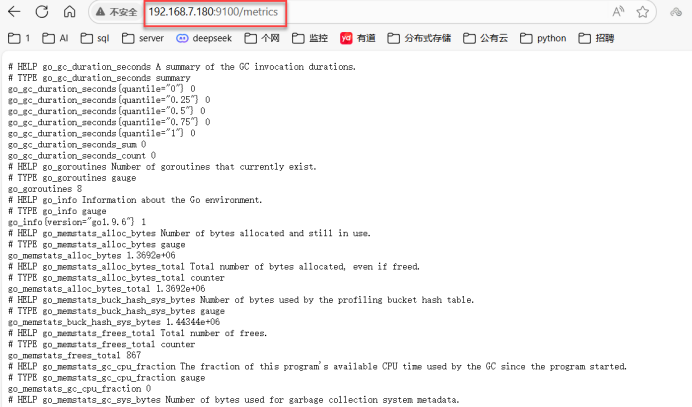

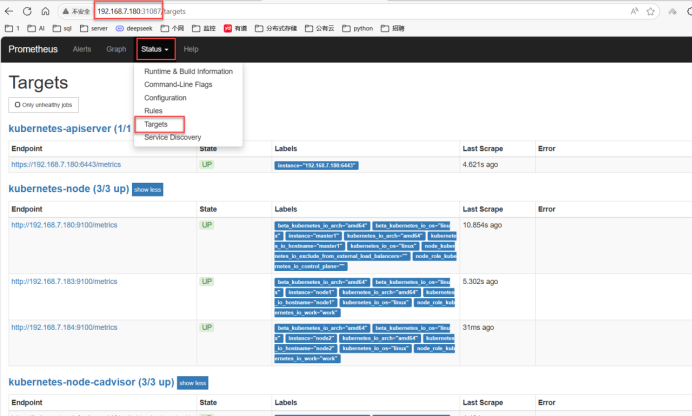

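[root@master1 prometheus-k8s]# netstat -tunlp | grep :9100    # check that node-exporter is listening on port 9100

tcp6 0 0 :::9100 :::* LISTEN 25942/node_exporter

A LISTEN entry owned by node_exporter (PID 25942 here) means the exporter is running on this node.

[root@master1 prometheus-k8s]# curl http://192.168.7.180:9100/metircs    # query node-exporter from the command line; the path is misspelled here, so the response below is the exporter's landing page, and /metrics is the path that returns the metric values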

<html>

<head><title>Node Exporter</title></head>

<body>

<h1>Node Exporter</h1>

<p><a href="/metrics">Metrics</a></p>

</body>

</html>

[root@master1 prometheus-k8s]#
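
The landing page above links to `/metrics`, which serves the metrics as plain-text lines of the form `name value`. A minimal sketch for pulling one unlabelled metric out of that text; `metric_value` is a hypothetical helper name, and `node_load1` (node_exporter's 1-minute load average) is used only as an example metric.

```shell
# Hypothetical helper: print the value of one unlabelled metric from
# Prometheus text-format input on stdin (HELP/TYPE comment lines start with "#").
metric_value() {
  awk -v name="$1" '$1 == name { print $2 }'
}

# Usage against the exporter (requires network access to the node):
#   curl -s http://192.168.7.180:9100/metrics | metric_value node_load1
```

Metrics that carry labels (for example per-CPU counters) have the labels as part of the first field, so they need the full `name{...}` string or a different match.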

[root@master1 prometheus-k8s]# kubectl create serviceaccount monitor -n monitor-sa    # create the service account monitor in the monitor-sa namespace

serviceaccount/monitor created

[root@master1 prometheus-k8s]# kubectl create clusterrolebinding monitor-clusterrolebinding -n monitor-sa --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor    # bind the monitor service account to the cluster-admin ClusterRole through a ClusterRoleBinding

clusterrolebinding.rbac.authorization.k8s.io/monitor-clusterrolebinding created

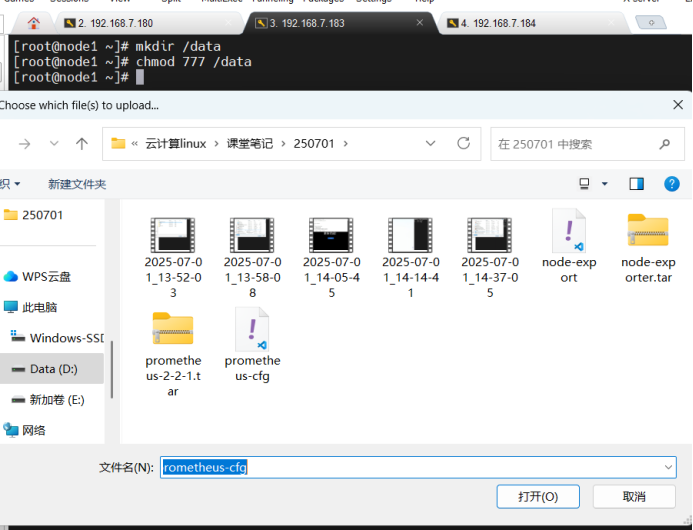

[root@node1 ~]# mkdir /data    # create the data directory /data (on both node1 and node2)

[root@node1 ~]# chmod 777 /data    # open up permissions on /data

[root@node2 ~]# mkdir /data

[root@node2 ~]# chmod 777 /data

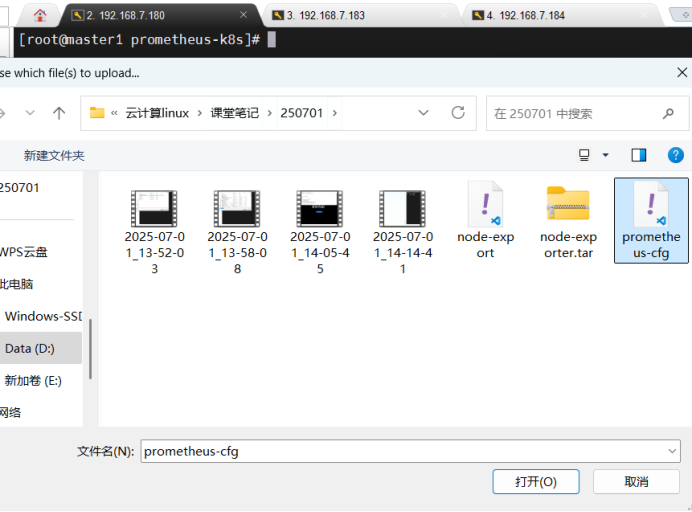

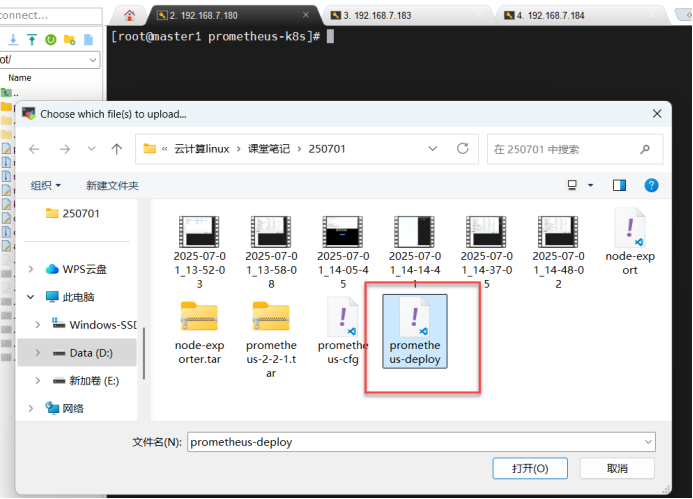

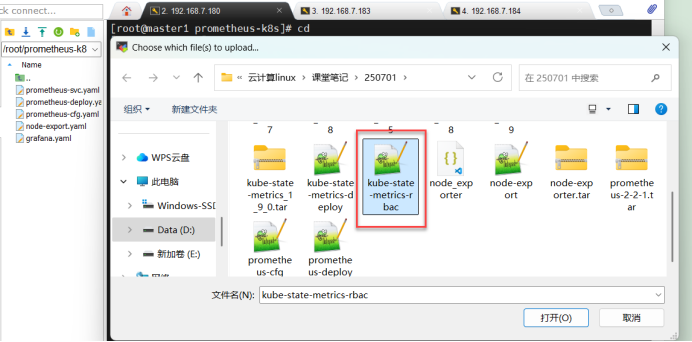

[root@node2 ~]#

On master1, upload prometheus-cfg.yaml, the manifest for the Prometheus ConfigMap volume, which mainly holds the Prometheus configuration file.

[root@master1 /]# cd

[root@master1 ~]# cd prometheus-k8s/

[root@master1 prometheus-k8s]# cp /root/prometheus-cfg.yaml .

[root@master1 prometheus-k8s]# ls

node-export.yaml prometheus-cfg.yaml

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# kubectl apply -f prometheus-cfg.yaml    # apply the Prometheus ConfigMap manifest (it mainly holds the configuration file)

configmap/prometheus-config created

[root@master1 prometheus-k8s]# kubectl get cm -n monitor-sa    # list the ConfigMaps in the monitor-sa namespace

NAME DATA AGE

kube-root-ca.crt 1 53m

prometheus-config 1 20s

[root@master1 prometheus-k8s]#

Upload prometheus-2-2-1.tar.gz (the Prometheus image archive) to node1 and node2.

[root@node1 ~]# ls

anaconda-ks.cfg calico.tar.gz prometheus-2-2-1.tar.gz

busybox-1-28.tar.gz node-exporter.tar.gz prometheus-cfg.yaml

[root@node1 ~]# ctr -n k8s.io images import prometheus-2-2-1.tar.gz    # import the Prometheus image archive (on both node1 and node2)

unpacking docker.io/prom/prometheus:v2.2.1 (sha256:9b21b0369c9816ebd0bb41237def3de7e28c36cdc7a3e35e877c6475ef982d4a)...done

[root@node1 ~]#

[root@node2 ~]# ctr -n k8s.io images import prometheus-2-2-1.tar.gz

unpacking docker.io/prom/prometheus:v2.2.1 (sha256:9b21b0369c9816ebd0bb41237def3de7e28c36cdc7a3e35e877c6475ef982d4a)...done

[root@node2 ~]#

[root@master1 ~]# ls

anaconda-ks.cfg node_exporter-1.5.0.linux-amd64.tar.gz prometheus-deploy.yaml

calico.tar.gz node-exporter.tar.gz prometheus-k8s

calico.yaml node-export.yaml

kubeadm.yaml prometheus-cfg.yaml

[root@master1 ~]# cd prometheus-k8s/

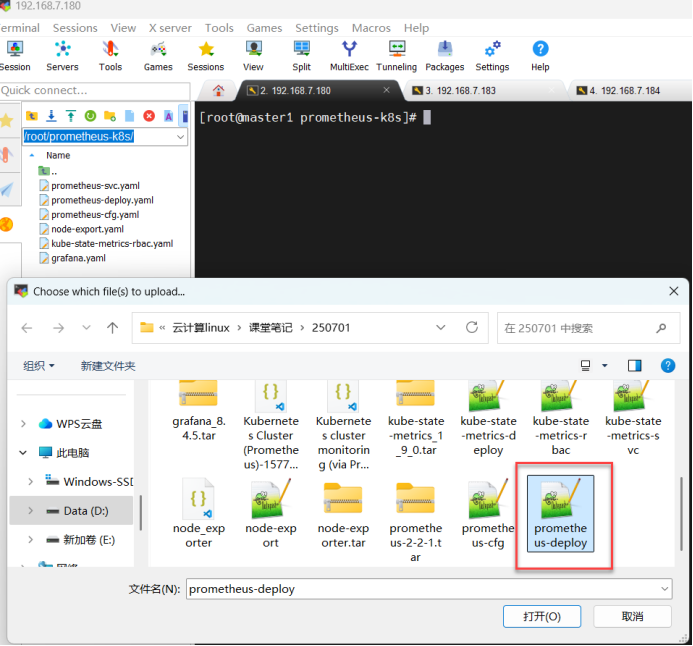

[root@master1 prometheus-k8s]# cp /root/prometheus-deploy.yaml .    # copy the Prometheus Deployment manifest into the prometheus-k8s directory

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# vim prometheus-deploy.yaml    # edit the Prometheus Deployment manifest

    spec:
      nodeName: node1    # pin the Pod to a worker node: node1 here, or node2 instead (only one nodeName per Pod spec; keep the indentation aligned with the neighbouring keys)

[root@master1 prometheus-k8s]# kubectl apply -f prometheus-deploy.yaml    # apply the Prometheus Deployment manifest

deployment.apps/prometheus-server created

[root@master1 prometheus-k8s]# kubectl get pods -n monitor-sa    # list the Pods in the monitor-sa namespace

NAME READY STATUS RESTARTS AGE

node-exporter-g49bj 1/1 Running 0 56m

node-exporter-hffgm 1/1 Running 0 56m

node-exporter-xflj5 1/1 Running 0 56m

prometheus-server-6fc5b6f457-sp48r 1/1 Running 0 16s    <- the new Prometheus server Pod

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# vim prometheus-svc.yaml    # create the Prometheus Service manifest

    apiVersion: v1             # API version v1
    kind: Service              # resource type: Service
    metadata:                  # metadata
      name: prometheus         # Service name
      namespace: monitor-sa    # namespace: monitor-sa
      labels:                  # labels on the Service
        app: prometheus
    spec:
      type: NodePort           # expose the Service on a node port
      ports:
      - port: 9090             # Service port
        targetPort: 9090       # target (container) port
        protocol: TCP          # protocol
      selector:                # label selector that picks the backing Pods
        app: prometheus
        component: server

[root@master1 prometheus-k8s]# kubectl apply -f prometheus-svc.yaml    # apply the Prometheus Service manifest

service/prometheus created

[root@master1 prometheus-k8s]# kubectl get svc -n monitor-sa    # list the Services in the monitor-sa namespace

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

prometheus NodePort 10.110.208.180 <none> 9090:31087/TCP 15s

[root@master1 prometheus-k8s]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-7dc5458bc6-5jztl 1/1 Running 2 (89m ago) 11d 10.244.137.72 master1 <none> <none>

calico-node-clzfg 1/1 Running 2 (89m ago) 11d 192.168.7.183 node1 <none> <none>

calico-node-kbqjt 1/1 Running 2 (89m ago) 11d 192.168.7.180 master1 <none> <none>

calico-node-ng6b2 1/1 Running 2 (89m ago) 11d 192.168.7.184 node2 <none> <none>

coredns-7c445c467-kff9r 1/1 Running 2 (89m ago) 11d 10.244.137.71 master1 <none> <none>

coredns-7c445c467-mmcg7 1/1 Running 2 (89m ago) 11d 10.244.137.73 master1 <none> <none>

etcd-master1 1/1 Running 2 (89m ago) 11d 192.168.7.180 master1 <none> <none>

kube-apiserver-master1 1/1 Running 2 (89m ago) 11d 192.168.7.180 master1 <none> <none>

kube-controller-manager-master1 1/1 Running 2 (89m ago) 11d 192.168.7.180 master1 <none> <none>

kube-proxy-6kdb8 1/1 Running 2 (89m ago) 11d 192.168.7.180 master1 <none> <none>

kube-proxy-gq9js 1/1 Running 2 (89m ago) 11d 192.168.7.184 node2 <none> <none>

kube-proxy-vjm2p 1/1 Running 2 (89m ago) 11d 192.168.7.183 node1 <none> <none>

kube-scheduler-master1 1/1 Running 2 (89m ago) 11d 192.168.7.180 master1 <none> <none>

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]#

Upload grafana_8.4.5.tar.gz to node1 and node2.

2. Install Docker on master1

[root@master1 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2    # install the packages Docker depends on

Rocky Linux 9 - BaseOS 1.0 kB/s | 4.1 kB 00:04

Rocky Linux 9 - BaseOS 141 kB/s | 2.5 MB 00:17

Rocky Linux 9 - AppStream 706 B/s | 4.5 kB 00:06

[root@master1 ~]# yum config-manager --add-repo=https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo    # add the Aliyun docker-ce repository

Adding repo from: https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@master1 ~]# dnf -y install docker-ce    # install Docker

Docker CE Stable - x86_64 1.8 kB/s | 3.5 kB 00:01

Dependencies resolved.

[root@master1 ~]# systemctl enable --now docker    # start Docker and enable it at boot

Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /usr/lib/systemd/system/docker.service.

[root@master1 ~]# vim /etc/docker/daemon.json    # create the Docker registry-mirror configuration file

    {
      "registry-mirrors": [
        "https://mirror.baidubce.com",
        "https://9cpn8tt6.mirror.aliyuncs.com",
        "https://registry.docker-cn.com",
        "https://dockerproxy.com",
        "https://mirror.baidubce.com",
        "https://docker.m.daocloud.io",
        "https://docker.nju.edu.cn",
        "https://docker.1panel.live",
        "https://hub.rat.dev",
        "https://mirror-gcr.onrender.com",
        "https://docker.mirrors.sjtug.sjtu.edu.cn",
        "https://docker.mirrors.ustc.edu.cn",
        "https://reg-mirror.qiniu.com",
        "https://registry.docker-cn.com"
      ]
    }

[root@master1 ~]# systemctl restart docker    # restart Docker to load the new configuration
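
The registry-mirrors list above repeats two entries (https://mirror.baidubce.com and https://registry.docker-cn.com each appear twice). A minimal sketch for spotting such repeats, assuming each mirror is written as a quoted https URL; `dup_mirrors` is a hypothetical helper name.

```shell
# Hypothetical helper: read a daemon.json-style file on stdin and print
# every quoted https URL that appears more than once.
dup_mirrors() {
  grep -o '"https://[^"]*"' | sort | uniq -d
}

# Usage on master1:
#   dup_mirrors < /etc/docker/daemon.json
```

An empty result means every mirror is listed once.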

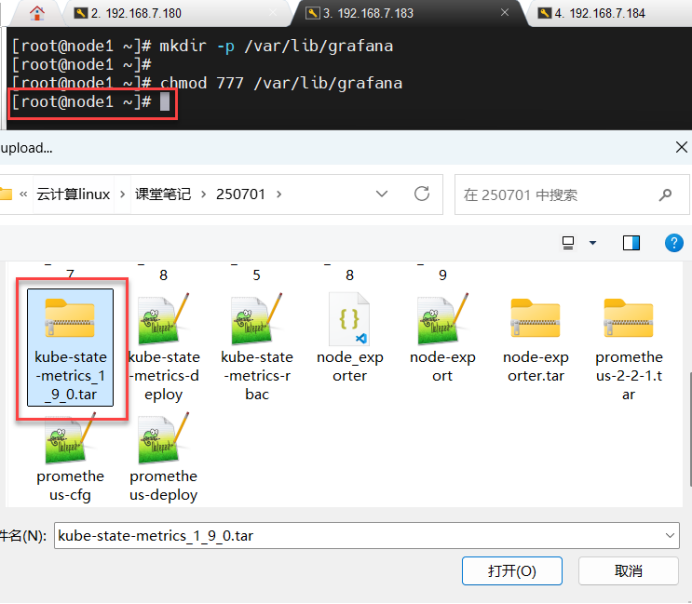

[root@master1 ~]#

Upload kube-state-metrics_1_9_0.tar.gz to node1 and node2.

[root@node1 ~]# ctr -n k8s.io images import kube-state-metrics_1_9_0.tar.gz    # import the kube-state-metrics image (on both node1 and node2)

unpacking quay.io/coreos/kube-state-metrics:v1.9.0 (sha256:bf40aa1452dcefe34680c595995af1f4a72b7a480b3597fe863a3a5c4e8dde42)...done

[root@node2 ~]# ctr -n k8s.io images import kube-state-metrics_1_9_0.tar.gz

unpacking quay.io/coreos/kube-state-metrics:v1.9.0 (sha256:bf40aa1452dcefe34680c595995af1f4a72b7a480b3597fe863a3a5c4e8dde42)...done

[root@node2 ~]#

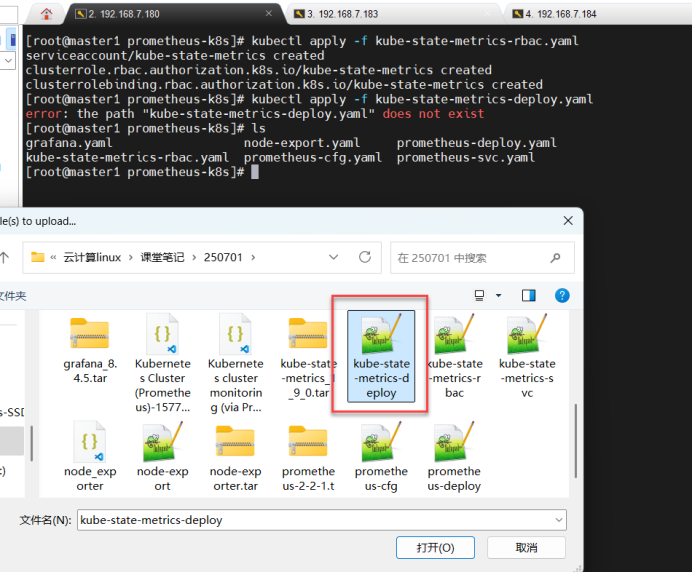

[root@master1 prometheus-k8s]# ls

grafana.yaml node-export.yaml prometheus-deploy.yaml

kube-state-metrics-rbac.yaml prometheus-cfg.yaml prometheus-svc.yaml

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# kubectl apply -f kube-state-metrics-rbac.yaml    # apply the kube-state-metrics RBAC manifest

Purpose of kube-state-metrics-rbac.yaml: create the service account and grant it permissions.

serviceaccount/kube-state-metrics created

clusterrole.rbac.authorization.k8s.io/kube-state-metrics created

clusterrolebinding.rbac.authorization.k8s.io/kube-state-metrics created

[root@master1 prometheus-k8s]# ls

grafana.yaml kube-state-metrics-deploy.yaml kube-state-metrics-rbac.yaml node-export.yaml prometheus-cfg.yaml prometheus-deploy.yaml prometheus-svc.yaml

Note the newly added kube-state-metrics-deploy.yaml.

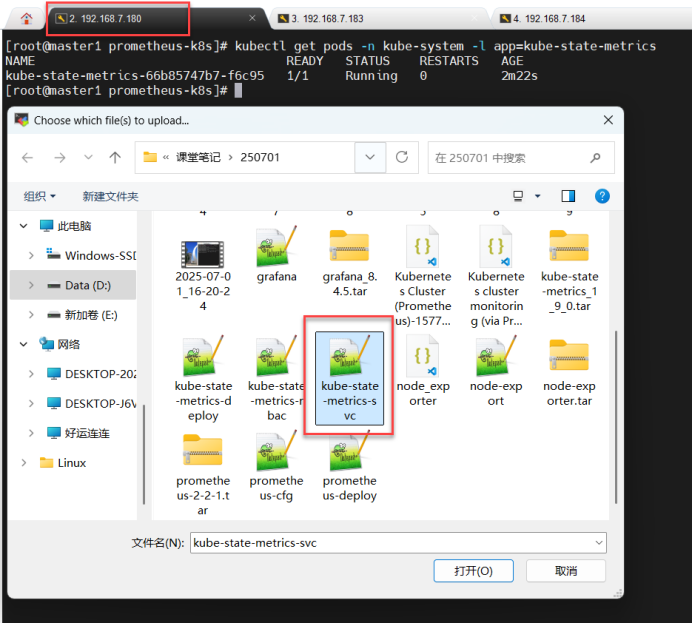

[root@master1 prometheus-k8s]# kubectl apply -f kube-state-metrics-deploy.yaml    # apply the kube-state-metrics Deployment manifest

deployment.apps/kube-state-metrics created

[root@master1 prometheus-k8s]# kubectl get pods -n kube-system -l app=kube-state-metrics    # show the kube-state-metrics Pod

NAME READY STATUS RESTARTS AGE

kube-state-metrics-66b85747b7-f6c95 1/1 Running 0 2m22s

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# kubectl apply -f kube-state-metrics-svc.yaml    # apply the kube-state-metrics Service manifest

service/kube-state-metrics created

[root@master1 prometheus-k8s]#

[root@master1 prometheus-k8s]# kubectl get pods -n kube-system -l app=kube-state-metrics    # show the kube-state-metrics Pod

NAME READY STATUS RESTARTS AGE

kube-state-metrics-66b85747b7-kxnn4 1/1 Running 0 8m54s

[root@master1 prometheus-k8s]# kubectl get svc -n kube-system -l app=kube-state-metrics    # show the kube-state-metrics Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-state-metrics ClusterIP 10.110.158.228 <none> 8080/TCP 74s

[root@master1 prometheus-k8s]#

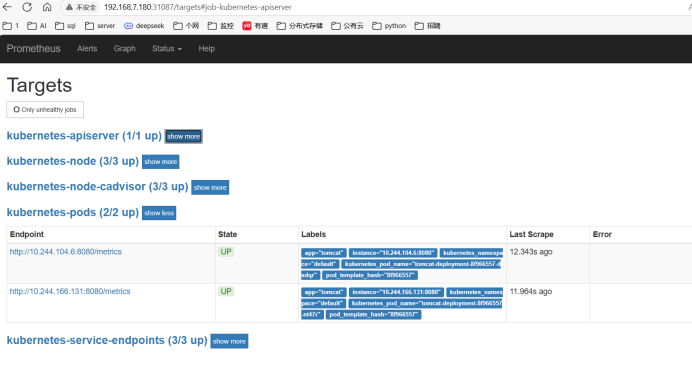

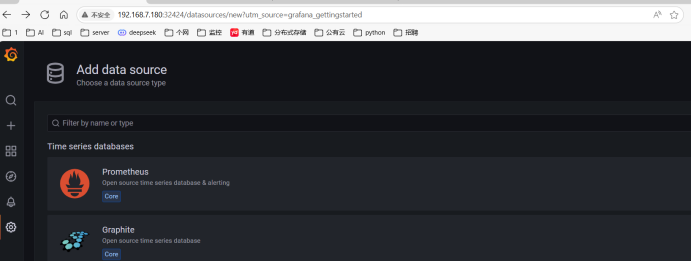

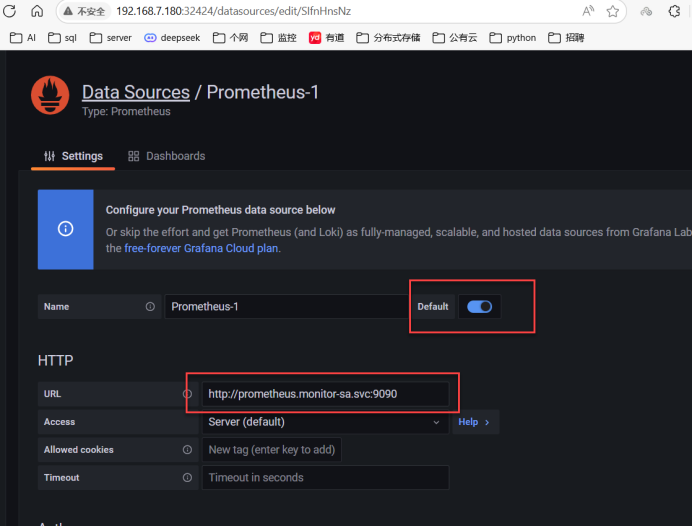

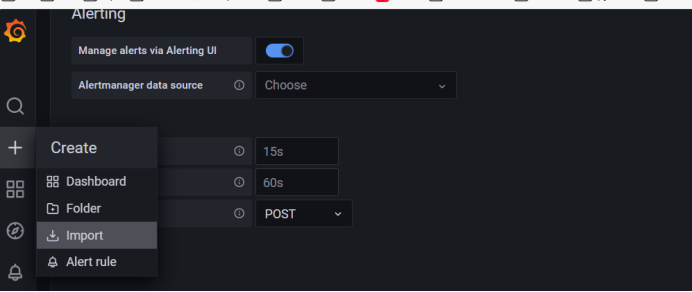

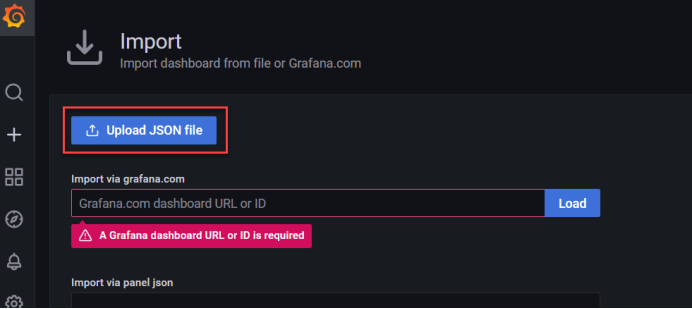

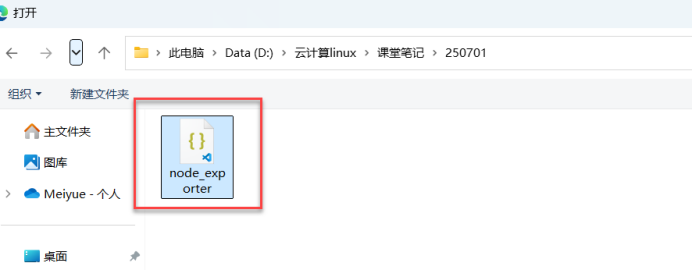

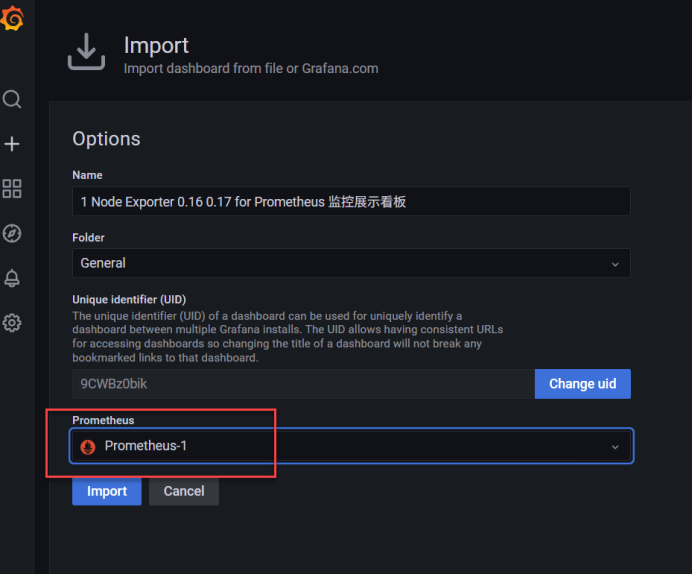

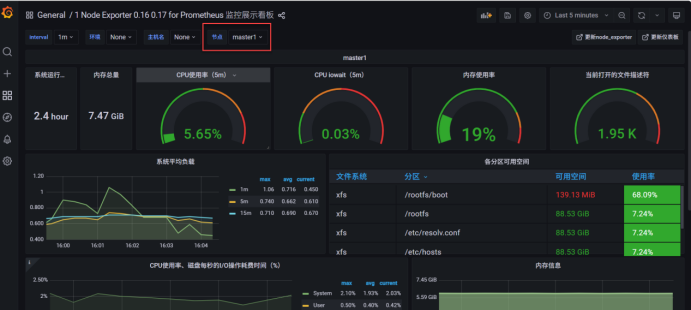

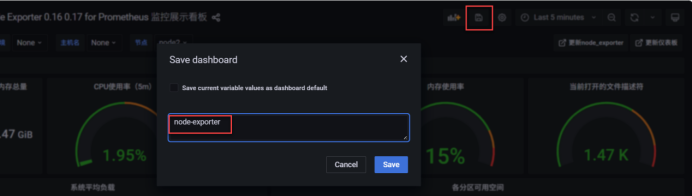

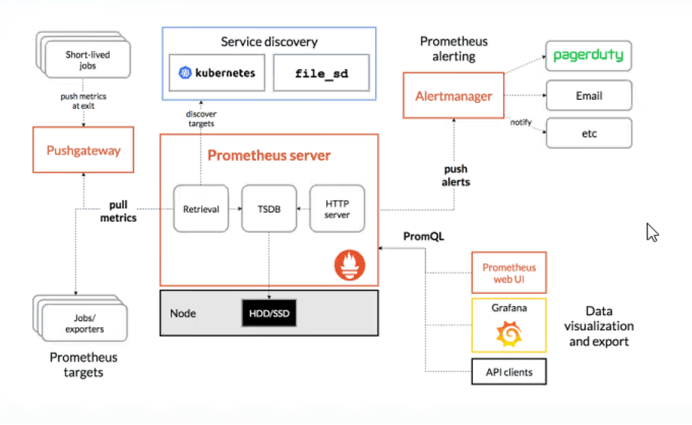

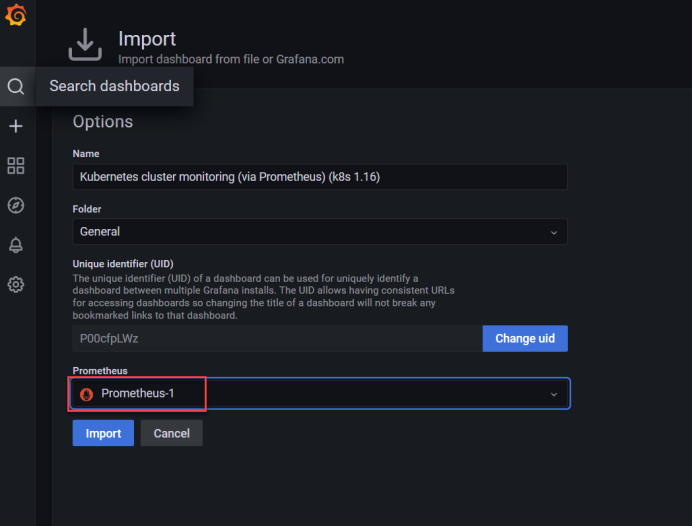

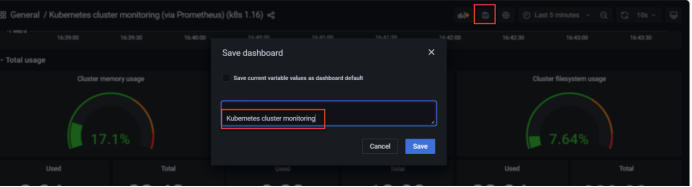

Prometheus and Grafana are now configured.

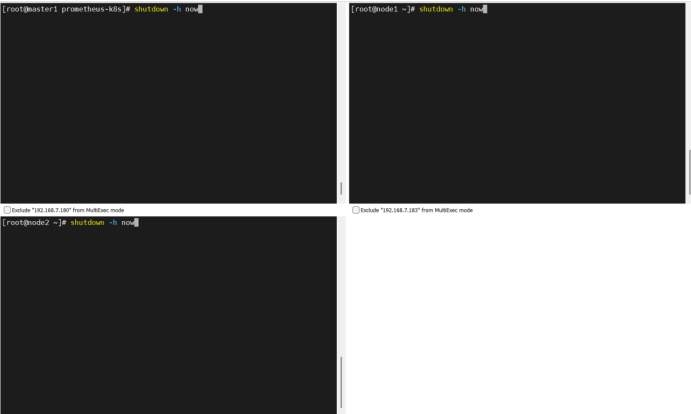

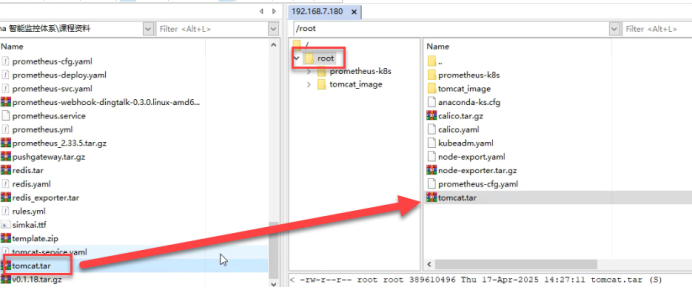

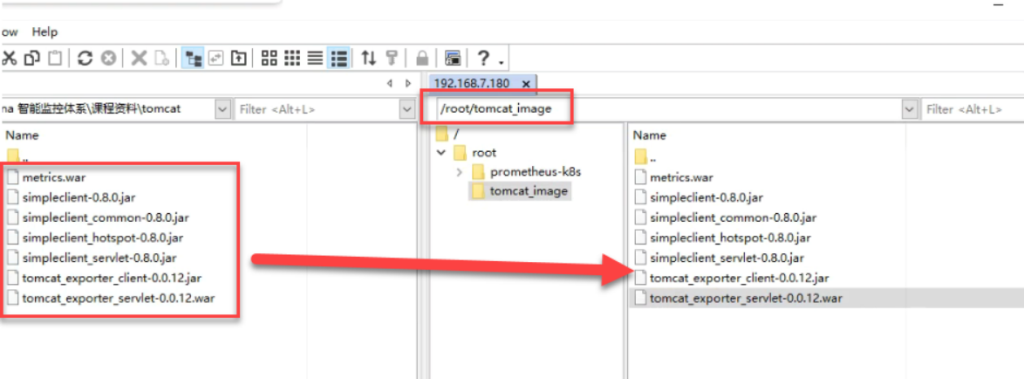

2. Upload the Tomcat image archive to /root

[root@master1 ~]# ls

anaconda-ks.cfg kube-state-metrics-rbac.yaml prometheus-deploy.yaml

calico.tar.gz node_exporter-1.5.0.linux-amd64.tar.gz prometheus-k8s

calico.yaml node-exporter.tar.gz tomcat_image    <- note the tomcat_image directory

grafana.yaml node-export.yaml tomcat-service.yaml

kubeadm.yaml prometheus-cfg.yaml tomcat.tar

[root@master1 ~]# ctr -n k8s.io images import tomcat.tar    # import the Tomcat image archive

unpacking docker.io/library/tomcat:8.5-jdk8-corretto (sha256:e6adebed2b21806e00be62aaec12d666fcfc5dfc8fd6ad42182da57c5a2bdc91)...done

[root@master1 ~]# cd tomcat_image/【进入tomcat的镜像目录】三、导入Dockefile文件及创建Dockerfile文件

创建tomact_image目录、将Dockerfile文件上传至tomact_image目录下

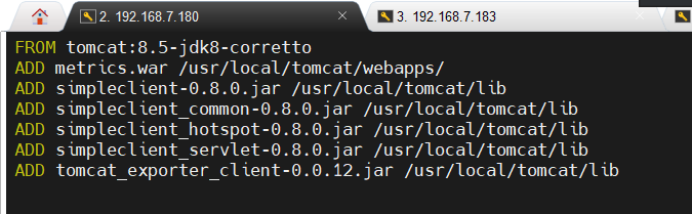

[root@master1 tomcat_image]# vim Dockerfile 【创建Dockerfile文件】

docker使用dockerfile创建tomcat_prometheus的镜像

[root@master1 tomcat_image]# docker build -t=tomcat_prometheus:v1 . 【docker使用dockerfile创建tomcat_prometheus的镜像】

[+] Building 59.1s (1/2) docker:default

=> [internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 395B 0.0s

=> [internal] load metadata for docker.io/library/tomcat:8.5-jdk8-corretto 59.1s

[root@master1 tomcat_image]# cd

[root@master1 ~]# docker images    # list the local Docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

tomcat_prometheus v1 78cb0081dde3 50 seconds ago 382MB

[root@master1 ~]# docker save -o p_t.tar tomcat_prometheus:v1    # save the tomcat_prometheus image to a local archive

4. On master1, copy the saved tomcat_prometheus image archive to /root on node1 and node2

[root@master1 ~]# scp p_t.tar 192.168.7.183:/root    # copy the image archive to /root on node1 (192.168.7.183)

The authenticity of host '192.168.7.183 (192.168.7.183)' can't be established.

ED25519 key fingerprint is SHA256:zB5i7494g345upt8+lDCavhMxfvjaDe/X3Z+e+xK74g.

This host key is known by the following other names/addresses:

~/.ssh/known_hosts:10: node1

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added '192.168.7.183' (ED25519) to the list of known hosts.

p_t.tar 100% 372MB 194.1MB/s 00:01

[root@master1 ~]# scp p_t.tar 192.168.7.184:/root    # copy the image archive to /root on node2 (192.168.7.184)

The authenticity of host '192.168.7.184 (192.168.7.184)' can't be established.

ED25519 key fingerprint is SHA256:khjoIzZZsh71bZ1a4vW4wvxh7a0TKmu6CCNY9Go6rWI.

This host key is known by the following other names/addresses:

~/.ssh/known_hosts:13: node2

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added '192.168.7.184' (ED25519) to the list of known hosts.

p_t.tar 100% 372MB 218.3MB/s 00:01

[root@master1 ~]#

5. On node1 and node2 (the 192.168.7.183 and 192.168.7.184 virtual machines), import the p_t.tar image

[root@node1 ~]# ls

anaconda-ks.cfg grafana_8.4.5.tar.gz prometheus-2-2-1.tar.gz

busybox-1-28.tar.gz kube-state-metrics_1_9_0.tar.gz prometheus-cfg.yaml

calico.tar.gz node-exporter.tar.gz p_t.tar    <- p_t.tar is listed after the copy

[root@node1 ~]# ctr -n k8s.io images import p_t.tar    # import the p_t.tar image

unpacking docker.io/library/tomcat_prometheus:v1 (sha256:9e2677360aa41254308ec07157a4a57d513ff7989dac211072e9cc5f07cd9c83)...done

[root@node1 ~]#

[root@node2 ~]# ls

anaconda-ks.cfg grafana_8.4.5.tar.gz prometheus-2-2-1.tar.gz

busybox-1-28.tar.gz kube-state-metrics_1_9_0.tar.gz prometheus-cfg.yaml

calico.tar.gz node-exporter.tar.gz p_t.tar    <- p_t.tar is listed after the copy

[root@node2 ~]# ctr -n k8s.io images import p_t.tar

unpacking docker.io/library/tomcat_prometheus:v1 (sha256:9e2677360aa41254308ec07157a4a57d513ff7989dac211072e9cc5f07cd9c83)...done
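
The copy and import steps above are repeated by hand for each node. A minimal sketch that prints the same two commands per node so they can be reviewed before being run, assuming root SSH access from master1 to each node and /root as the target directory; `distribute_cmds` is a hypothetical helper name.

```shell
# Hypothetical helper: print (not run) the copy and import commands for each node.
distribute_cmds() {
  archive="$1"; shift
  for node in "$@"; do
    printf 'scp %s %s:/root\n' "$archive" "$node"
    printf 'ssh %s ctr -n k8s.io images import /root/%s\n' "$node" "$archive"
  done
}

# Print the commands for the two worker nodes used in these notes.
distribute_cmds p_t.tar 192.168.7.183 192.168.7.184
```

Printing rather than executing keeps the sketch safe to try; the printed lines can be pasted into the master1 shell one at a time.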

[root@node2 ~]#

6. Create the Deployment manifest on master1

[root@master1 ~]# vim deploy.yaml    # create the Deployment manifest; this stateless Deployment publishes the application on the network so that others can access it

    apiVersion: apps/v1                   # API version
    kind: Deployment                      # resource type: Deployment (stateless workload)
    metadata:                             # metadata
      name: tomcat-deployment             # Deployment name
      namespace: default                  # namespace: default
    spec:                                 # desired state of the Deployment
      selector:                           # label selector
        matchLabels:                      # labels the Pods must carry
          app: tomcat
      replicas: 2                         # tells deployment to run 2 pods matching the template
      template:                           # create pods using pod definition in this template
        metadata:                         # Pod metadata
          labels:                         # Pod labels
            app: tomcat
          annotations:                    # annotations
            prometheus.io/scrape: 'true'  # tells Prometheus to scrape metrics from these Pods
        spec:                             # Pod spec
          containers:                     # containers
          - name: tomcat                  # container name
            image: tomcat_prometheus:v1   # image: tomcat_prometheus:v1
            ports:                        # ports
            - containerPort: 8080         # container port 8080
            securityContext:              # security context
              privileged: true            # run the container in privileged mode

[root@master1 ~]# kubectl apply -f deploy.yaml

deployment.apps/tomcat-deployment created

7. Test access over the network to verify that monitoring is set up and that Tomcat is being monitored