I. Lab environment:

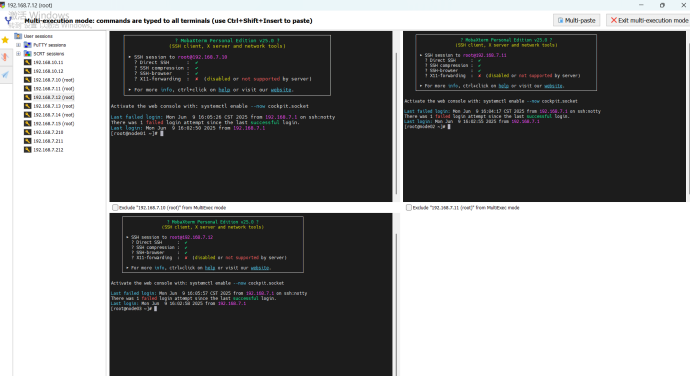

After restoring the systems to a clean state, create three Docker hosts and power them on.

II. Edit the hosts file and set the hostname on all three nodes:

node01 is the manager node; node02 and node03 are worker nodes.

node01.benet.com

node02.benet.com

node03.benet.com

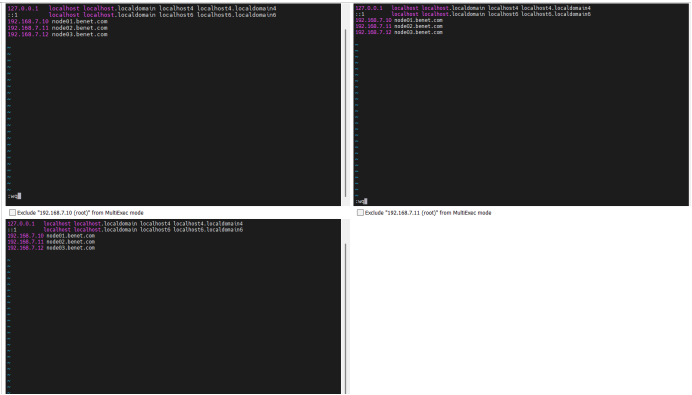

vim /etc/hosts

192.168.7.10 node01.benet.com

192.168.7.11 node02.benet.com

192.168.7.12 node03.benet.com
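The same three entries have to be added on every node, so it can help to script the step. The following is a minimal sketch, not part of the original lab: the helper name `add_host_entry` and the `HOSTS_FILE` variable are assumptions made for illustration. It appends an entry only when the hostname is not already listed, so it can be run more than once.

```shell
# add_host_entry FILE IP NAME (hypothetical helper):
# append "IP NAME" to FILE unless NAME is already listed there.
add_host_entry() {
    # -w matches NAME as a whole word; -F treats it as a fixed string, not a regex.
    if ! grep -qwF -- "$3" "$1" 2>/dev/null; then
        printf '%s %s\n' "$2" "$3" >> "$1"
    fi
}

# HOSTS_FILE defaults to a scratch file so the sketch can be tried safely;
# set HOSTS_FILE=/etc/hosts (as root) to apply it for real.
HOSTS_FILE=${HOSTS_FILE:-$(mktemp)}
add_host_entry "$HOSTS_FILE" 192.168.7.10 node01.benet.com
add_host_entry "$HOSTS_FILE" 192.168.7.11 node02.benet.com
add_host_entry "$HOSTS_FILE" 192.168.7.12 node03.benet.com
```

Running the script a second time leaves the file unchanged, which is convenient when another node is added later.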

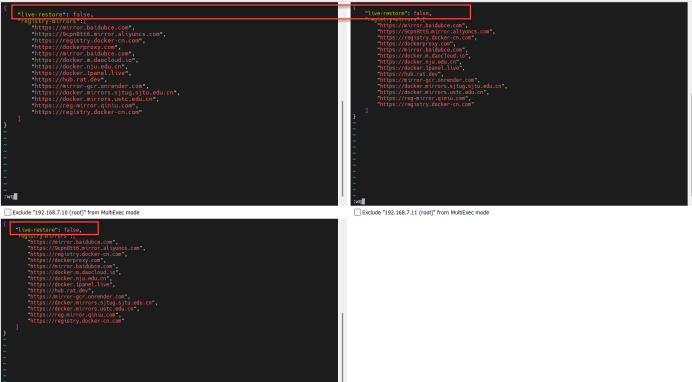

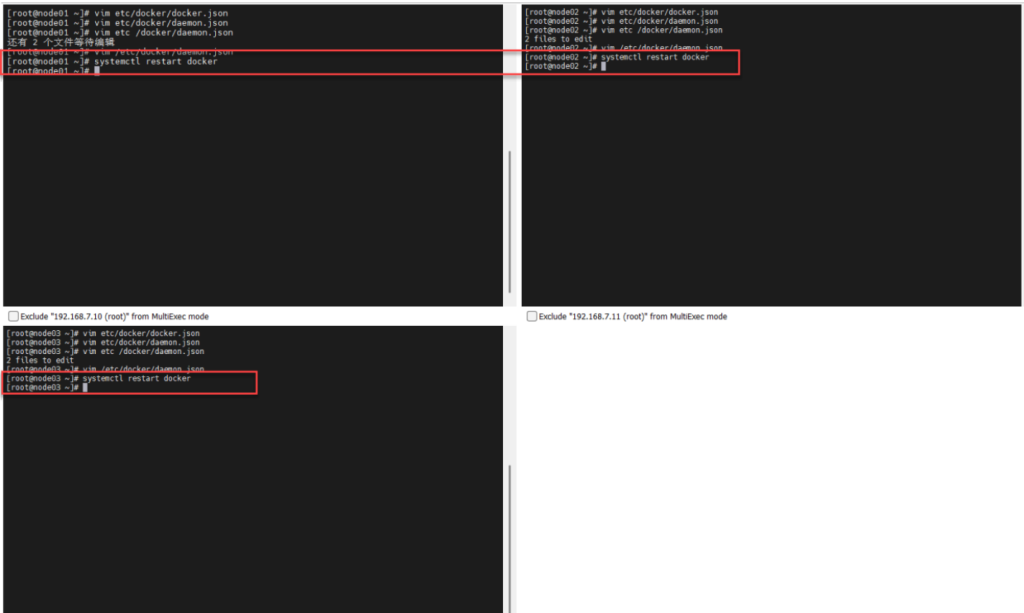

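In /etc/docker/daemon.json, live restore is turned off, because swarm mode is not compatible with the live-restore option:

"live-restore": false,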

[root@node01 ~]# systemctl restart docker    # restart Docker

III. Initialize the swarm cluster and get the command for joining worker nodes

[root@node01 ~]# docker swarm init    # initialize the swarm cluster

Swarm initialized: current node (0qre8ffdk6pkthiglkpkrqagt) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-4hxebsjeyisyajtqm1ow8pp9p5rhlv9zs1paq32y78zmacp86m-5ffv7jf0ns21vzfzmsly4lwg1 192.168.7.10:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.

The docker swarm join line in this output is the command that worker nodes run to join the cluster.

[root@node01 ~]#

IV. Join node02 and node03 to the cluster

[root@node02 ~]# docker swarm join --token SWMTKN-1-4hxebsjeyisyajtqm1ow8pp9p5rhlv9zs1paq32y78zmacp86m-5ffv7jf0ns21vzfzmsly4lwg1 192.168.7.10:2377    # join node02 to the cluster

This node joined a swarm as a worker.

[root@node02 ~]#

[root@node03 ~]# docker swarm join --token SWMTKN-1-4hxebsjeyisyajtqm1ow8pp9p5rhlv9zs1paq32y78zmacp86m-5ffv7jf0ns21vzfzmsly4lwg1 192.168.7.10:2377    # join node03 to the cluster

This node joined a swarm as a worker.
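The join command does not have to be copied by hand from the docker swarm init output. As a small sketch under stated assumptions: `docker swarm join-token -q worker` prints only the token, and the helper below (the name `join_command` is hypothetical) rebuilds the full command from a token and the manager address used in this lab.

```shell
# join_command TOKEN MANAGER_ADDR (hypothetical helper):
# print the join command for the given token and manager address.
join_command() {
    printf 'docker swarm join --token %s %s\n' "$1" "$2"
}

# On the manager, the token alone can be read with the -q flag:
#   token=$(docker swarm join-token -q worker)
#   join_command "$token" 192.168.7.10:2377
# The printed line is then run on each worker node.
```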

[root@node03 ~]#

V. View the cluster node information on the manager node node01

[root@node01 ~]# docker node ls    # list the cluster nodes

ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION

0qre8ffdk6pkthiglkpkrqagt * node01.benet.com Ready Active Leader 28.2.2

4c8s73esc17uq7ftieytj8c6f node02.benet.com Ready Active 28.2.2

ofqnmn4zfx5b9dmvbh99qvaj4 node03.benet.com Ready Active 28.2.2

[root@node01 ~]#

VI. Synchronize time on all three machines

[root@node01 ~]# vim Dockerfile    # create the Dockerfile

[root@node01 ~]#

FROM rockylinux:9.2

MAINTAINER serverworld <admin@srv.world>

RUN yum -y install httpd

RUN echo "node01.benet.com" > /var/www/html/index.html

EXPOSE 80

CMD ["-D", "FOREGROUND"]

ENTRYPOINT ["/usr/sbin/httpd"]

What each instruction does: FROM uses the Rocky Linux 9.2 image as the base; MAINTAINER records the author information; the first RUN installs Apache (httpd); the second RUN writes the Apache test page; EXPOSE declares port 80; CMD supplies the default arguments -D FOREGROUND, which keep httpd in the foreground so that the container stays running; ENTRYPOINT starts Apache when the container starts.

[root@node02 ~]# vim Dockerfile    # create the Dockerfile; only the test page text differs from node01

FROM rockylinux:9.2

MAINTAINER serverworld <admin@srv.world>

RUN yum -y install httpd

RUN echo "node02.benet.com" > /var/www/html/index.html

EXPOSE 80

CMD ["-D", "FOREGROUND"]

ENTRYPOINT ["/usr/sbin/httpd"]

[root@node03 ~]# vim Dockerfile    # create the Dockerfile; only the test page text differs from node01

FROM rockylinux:9.2

MAINTAINER serverworld <admin@srv.world>

RUN yum -y install httpd

RUN echo "node03.benet.com" > /var/www/html/index.html

EXPOSE 80

CMD ["-D", "FOREGROUND"]

ENTRYPOINT ["/usr/sbin/httpd"]

VII. Check that the web_server image exists on all three machines and view the running containers
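Before the image can be listed, it has to be built on each node. These notes show the build command only for node04 in Lab 2 (docker build -t web_server:latest .), so the following is a sketch under that assumption rather than the recorded steps for node01 to node03. The helper name `write_dockerfile` is hypothetical; it writes the Dockerfile shown in these notes with the given hostname as the test page text.

```shell
# write_dockerfile DIR HOSTNAME (hypothetical helper):
# write the lab's Dockerfile into DIR, using HOSTNAME as the test page text.
write_dockerfile() {
    mkdir -p "$1"
    cat > "$1/Dockerfile" <<EOF
FROM rockylinux:9.2
MAINTAINER serverworld <admin@srv.world>
RUN yum -y install httpd
RUN echo "$2" > /var/www/html/index.html
EXPOSE 80
CMD ["-D", "FOREGROUND"]
ENTRYPOINT ["/usr/sbin/httpd"]
EOF
}

# Example, run on each node (the build needs Docker and network access):
#   write_dockerfile /root "$(hostname)"
#   docker build -t web_server:latest /root
```

Because each node builds its own image with its own hostname in index.html, the response to a request shows which node's container served it.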

[root@node01 ~]# docker images    # list the Docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

web_server latest ab2aa9effc56 20 hours ago 234MB

nginx 1.26.0 94543a6c1aef 13 months ago 188MB

[root@node01 ~]# docker run -d -p 80:80 web_server    # start a container from the web_server image in the background (-d), mapping host port 80 to container port 80

840571d66c9782472a85cc781a14436eb54fef3a96af175010c9c43b49fb9d6f

[root@node01 ~]# docker ps    # list the running containers

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

840571d66c97 web_server "/usr/sbin/httpd -D …" 14 seconds ago Up 13 seconds 0.0.0.0:80->80/tcp, [::]:80->80/tcp xenodochial_mahavira

[root@node01 ~]#

[root@node02 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

web_server latest 69d72e4babdf 20 hours ago 234MB

nginx 1.26.0 94543a6c1aef 13 months ago 188MB

[root@node02 ~]# docker run -d -p 80:80 web_server

ac395c32e369f4185425b9b639401bc66382a9e0c4e0efa91b9d1b1223f11699

[root@node02 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

ac395c32e369 web_server "/usr/sbin/httpd -D …" 15 seconds ago Up 14 seconds 0.0.0.0:80->80/tcp, [::]:80->80/tcp gallant_hypatia

[root@node02 ~]#

[root@node03 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

web_server latest 0dd4dd1bcad2 20 hours ago 234MB

nginx 1.26.0 94543a6c1aef 13 months ago 188MB

[root@node03 ~]# docker run -d -p 80:80 web_server

4c06120c95a63d5a0c772dd68a6d132684030ccdf10d1141e4b551b24500bc56

[root@node03 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

4c06120c95a6 web_server "/usr/sbin/httpd -D …" 23 seconds ago Up 22 seconds 0.0.0.0:80->80/tcp, [::]:80->80/tcp pedantic_chandrasekhar

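[root@node03 ~]#

VIII. Create the swarm service on the manager node node01

[root@node01 ~]# docker service create --name swarm_cluster --replicas=2 -p 80:80 web_server:latest    # create a service named swarm_cluster

--replicas=2 sets the number of replicas to 2; -p 80:80 publishes the port, mapping port 80 on the hosts to port 80 in the containers.

[root@node01 ~]# docker service ls    # list the services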

ID NAME MODE REPLICAS IMAGE PORTS

rd3od6qm3a07 swarm_cluster replicated 2/2 web_server:latest *:80->80/tcp

[root@node01 ~]# docker service ps swarm_cluster

ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS

z9w8zhw0zzlo swarm_cluster.1 web_server:latest node01.benet.com Running Running about a minute ago

qoa06btby7jq swarm_cluster.2 web_server:latest node03.benet.com Running Running 2 minutes ago

[root@node01 ~]# docker service inspect swarm_cluster --pretty    # show the details of the swarm_cluster service

ID: rd3od6qm3a075699chke8u8g3

Name: swarm_cluster

Service Mode: Replicated

Replicas: 2

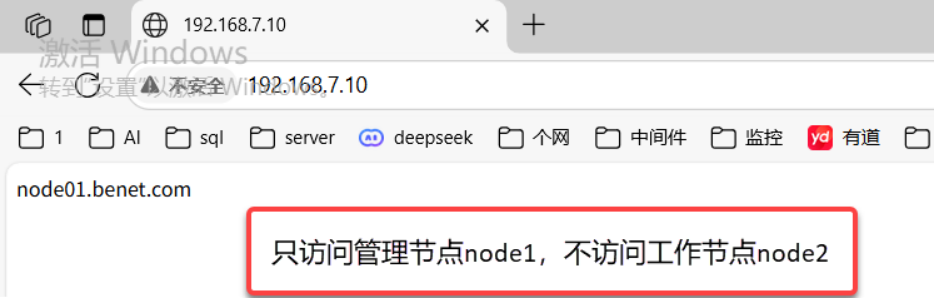

Placement:

[root@node01 ~]# curl 192.168.7.10    # access the manager node's IP

node01.benet.com

[root@node01 ~]# curl 192.168.7.10    # requests are distributed round-robin

node03.benet.com

[root@node01 ~]# curl 192.168.7.10    # round-robin

node01.benet.com

[root@node01 ~]# curl 192.168.7.10    # round-robin

node03.benet.com

[root@node01 ~]# curl 192.168.7.10

node01.benet.com

[root@node01 ~]# curl 192.168.7.10

node03.benet.com

[root@node01 ~]# curl 192.168.7.10

node01.benet.com

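[root@node01 ~]#

IX. Test access to the manager node node01 and check whether requests are distributed round-robin

Only two nodes answer, node01 and node03, because the service runs two replicas and they were placed on those nodes.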
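Repeating curl by hand works, but a short loop makes the distribution easier to read. This is a minimal sketch; the function names `tally_backends` and `poll` are assumptions for illustration, and the URL is the manager address used in this lab.

```shell
# tally_backends (hypothetical helper): read one response per line on stdin
# and print "COUNT NAME" for each distinct response.
tally_backends() {
    sort | uniq -c | awk '{print $1, $2}'
}

# poll N URL (hypothetical helper): request URL N times and tally the answers.
poll() {
    i=0
    while [ "$i" -lt "$1" ]; do
        curl -s "$2"
        i=$((i + 1))
    done | tally_backends
}

# Example on node01:
#   poll 10 http://192.168.7.10
```

With two replicas and strict alternation, ten requests should split evenly between the two hostnames.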

X. Scale the cluster: scale the swarm service to three replicas

[root@node01 ~]# docker service scale swarm_cluster=3    # scale the swarm service to three replicas

swarm_cluster scaled to 3

overall progress: 2 out of 3 tasks

1/3: running [==================================================>]

2/3: running [==================================================>]

3/3: preparing [=================================> ]

[root@node01 ~]# docker service ps swarm_cluster    # list the running tasks of the service (node01, node02, node03)

ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS

c79c0t65bkif swarm_cluster.1 web_server:latest node01.benet.com Running Running 12 minutes ago

rg5ygj87yz1w swarm_cluster.2 web_server:latest node02.benet.com Running Running 12 minutes ago

gn3nygv9kooi swarm_cluster.3 web_server:latest node03.benet.com Running Running 18 seconds ago
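The REPLICAS column of docker service ls has the form RUNNING/DESIRED (2/2 in the earlier listing). A script can wait for a scale operation to finish by comparing the two numbers. The sketch below assumes that plain format; the helper name `replicas_converged` is hypothetical.

```shell
# replicas_converged "RUNNING/DESIRED" (hypothetical helper):
# succeed when the number of running tasks equals the desired number.
replicas_converged() {
    [ "${1%%/*}" = "${1##*/}" ]
}

# Example on a manager node:
#   r=$(docker service ls --filter name=swarm_cluster --format '{{.Replicas}}')
#   replicas_converged "$r" && echo "swarm_cluster has converged"
```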

[root@node01 ~]# curl 192.168.7.10    # test: access the manager node's IP

node02.benet.com

[root@node01 ~]# curl 192.168.7.10    # round-robin

node03.benet.com

[root@node01 ~]# curl 192.168.7.10

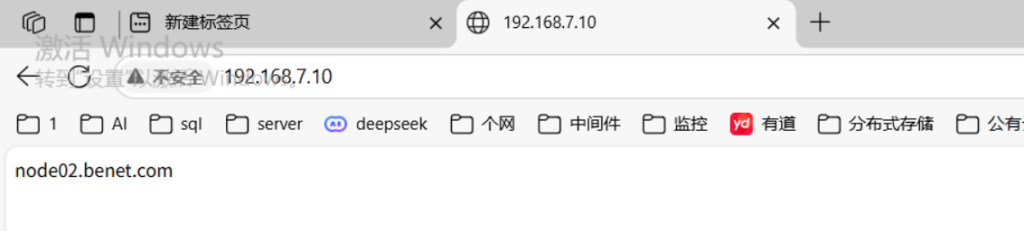

node01.benet.com十一、测试集群里三台机器是否添加上并且轮询

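Lab 2: Configuration for joining an additional node to the cluster

I. Lab environment:

Install one more machine with the address 192.168.7.13 and name it node04.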

二、配置思路:

1、修改计算机名

2、修改所有节点的host文件

3、进行时间同步

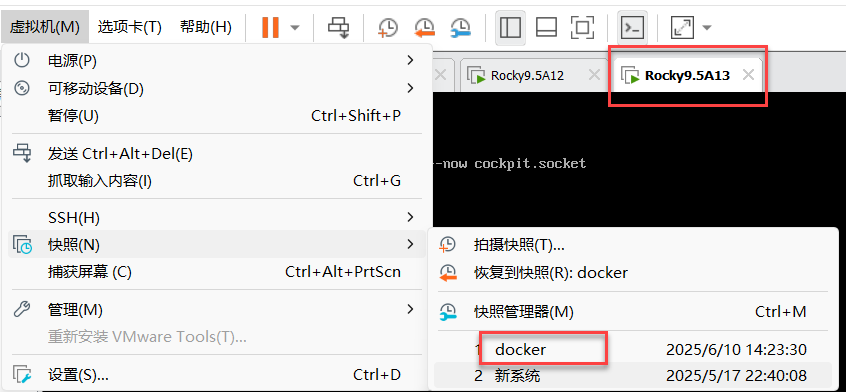

4、在要加入集群节点的主机上,安装docker;(如:节点04上安装docker,并拍摄快照名为:docker;)

5、在新结点上创建docker文件,根据docker文件,创建web_server的镜像

6、将web_server镜像,在后台生成容器

三、修改计算机名

[root@Server13 ~]# hostnamectl set-hostname node04.benet.com

[root@Server13 ~]# exit

logout

IV. Install Docker and take a snapshot named docker

[root@Server13 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

Rocky Linux 9 - BaseOS 507 kB/s | 2.5 MB 00:04

Rocky Linux 9 - AppStream 2.7 MB/s | 9.6 MB 00:03

Rocky Linux 9 - Extras 9.9 kB/s | 16 kB 00:01

Package device-mapper-persistent-data-1.0.9-3.el9_4.x86_64 is already installed.

Package lvm2-9:2.03.24-2.el9.x86_64 is already installed.

[root@Server13 ~]# yum config-manager --add-repo=https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Adding repo from: https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@Server13 ~]# dnf -y install docker-ce

Docker CE Stable - x86_64 73 kB/s | 73 kB 00:01

Dependencies resolved.

[root@Server13 ~]# systemctl enable --now docker

Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /usr/lib/systemd/system/docker.service.

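[root@Server13 ~]# vim /etc/docker/daemon.json    # edit the Docker daemon configuration file

As on the other nodes, "live-restore" must be false: Docker then does not use the live restore feature, and all running containers are stopped when the daemon restarts.

{

"registry-mirrors": [

"https://mirror.baidubce.com",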

"https://9cpn8tt6.mirror.aliyuncs.com",

"https://registry.docker-cn.com",

"https://dockerproxy.com",

"https://mirror.baidubce.com",

"https://docker.m.daocloud.io",

"https://docker.nju.edu.cn",

"https://docker.1panel.live",

"https://hub.rat.dev",

"https://mirror-gcr.onrender.com",

"https://docker.mirrors.sjtug.sjtu.edu.cn",

"https://docker.mirrors.ustc.edu.cn",

"https://reg-mirror.qiniu.com",

"https://registry.docker-cn.com"

]

}

[root@Server13 ~]# systemctl restart docker    # restart Docker

[root@Server13 ~]# shutdown -h now    # shut down so that the snapshot named docker can be taken

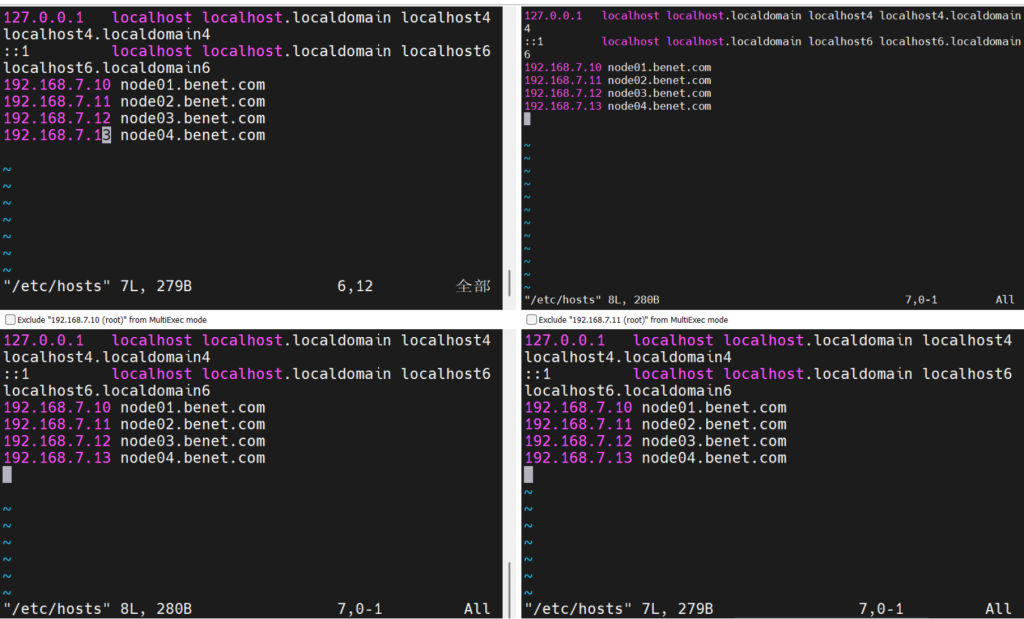

V. Edit the hosts file on all nodes

[root@node01 ~]# vim /etc/hosts

[root@node02 ~]# vim /etc/hosts

[root@node03 ~]# vim /etc/hosts

[root@node04 ~]# vim /etc/hosts

192.168.7.10 node01.benet.com

192.168.7.11 node02.benet.com

192.168.7.12 node03.benet.com

192.168.7.13 node04.benet.com

[root@node01 ~]# docker swarm join-token manager    # show the command for joining the swarm as a manager

To add a manager to this swarm, run the following command:

docker swarm join --token SWMTKN-1-4hxebsjeyisyajtqm1ow8pp9p5rhlv9zs1paq32y78zmacp86m-72azhfad1z3n2rruvcj9s4ng3 192.168.7.10:2377

Running this command on the new node adds it to the cluster and prints "This node joined a swarm as a manager."

[root@node01 ~]#

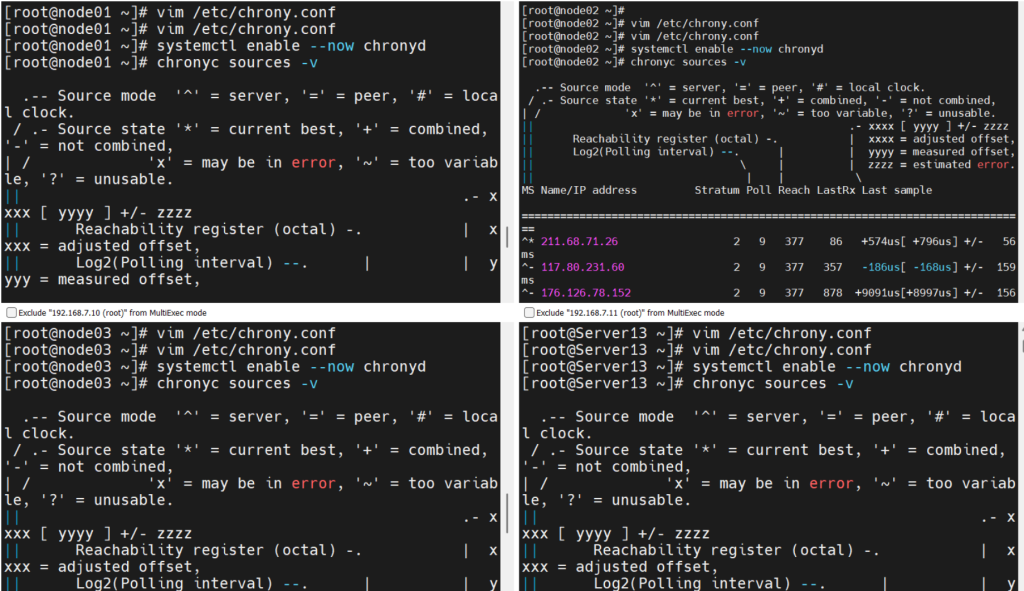

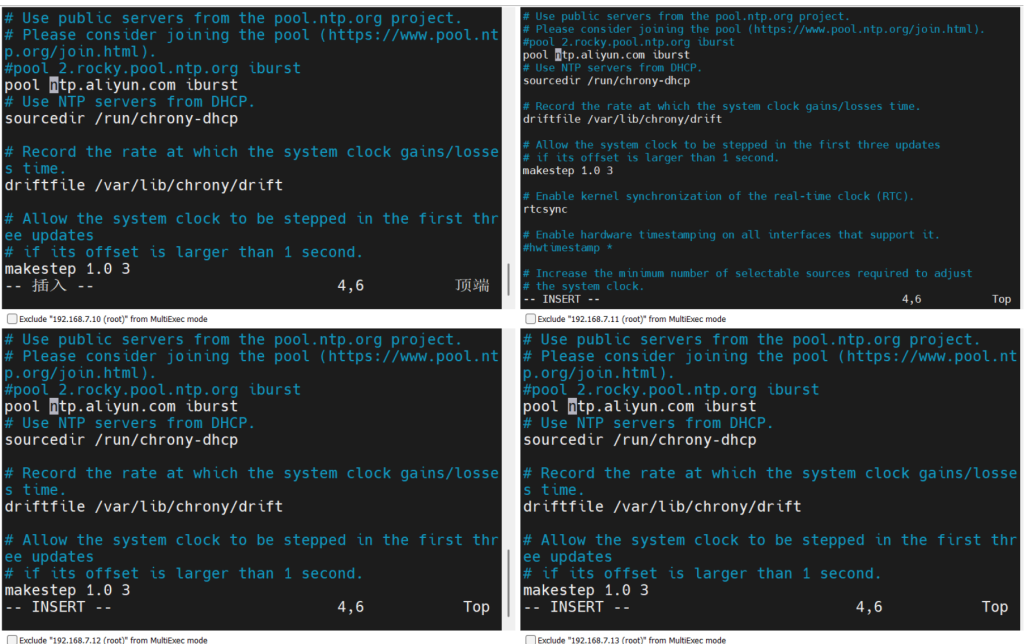

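VI. Synchronize time on node01, node02, node03 and node04

In /etc/chrony.conf, comment out the default pool and add the Aliyun NTP pool:

#pool 2.rocky.pool.ntp.org iburst

pool ntp.aliyun.com iburst

[root@node01 ~]# systemctl enable --now chronyd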

[root@node01 ~]# chronyc sources -v

[root@node01 ~]# date

2025年 06月 10日 星期二 14:35:25 CST

[root@node01 ~]# clock -w

[root@node02 ~]# systemctl enable --now chronyd

[root@node02 ~]# chronyc sources -v

[root@node02 ~]# date

2025年 06月 10日 星期二 14:35:25 CST

[root@node02 ~]# clock -w

[root@node03 ~]# systemctl enable --now chronyd

[root@node03 ~]# chronyc sources -v

[root@node03 ~]# date

2025年 06月 10日 星期二 14:35:25 CST

[root@node03 ~]# clock -w

[root@node04 ~]# systemctl enable --now chronyd

[root@node04 ~]# chronyc sources -v

[root@node04 ~]# date

2025年 06月 10日 星期二 14:35:25 CST

[root@node04 ~]# clock -w

VII. Copy the Dockerfile from the manager node node01 to node04

[root@node01 ~]# scp Dockerfile 192.168.7.13:/root    # copy the Dockerfile to node04

[root@node01 ~]# ls

anaconda-ks.cfg Dockerfile

[root@node01 ~]# scp Dockerfile 192.168.7.13:/root

The authenticity of host '192.168.7.13 (192.168.7.13)'

ED25519 key fingerprint is SHA256:H7LflkvVNKIcCNphIsXrJ

This key is not known by any other names

Are you sure you want to continue connecting (yes/no/[f

Warning: Permanently added '192.168.7.13' (ED25519) to

root@192.168.7.13's password:

Dockerfile

[root@node01 ~]#

VIII. Edit the Dockerfile on node04

[root@node04 ~]# vim Dockerfile

[root@node04 ~]#

FROM rockylinux:9.2

MAINTAINER serverworld <admin@srv.world>

RUN yum -y install httpd

RUN echo "node04.benet.com" > /var/www/html/index.html

EXPOSE 80

CMD ["-D", "FOREGROUND"]

ENTRYPOINT ["/usr/sbin/httpd"]

IX. Build the web_server image from the Dockerfile

[root@node04 ~]# docker build -t web_server:latest .    # build the web_server image from the Dockerfile

[+] Building 13.8s (1/2) docker:default

=> [internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 246B 0.0s

=> WARN: MaintainerDeprecated: Maintainer instruction is deprecated in favor of using label (line 2) 0.0s

=>

[root@node04 ~]# docker swarm join --token SWMTKN-1-4hxebsjeyisyajtqm1ow8pp9p5rhlv9zs1paq32y78zmacp86m-72azhfad1z3n2rruvcj9s4ng3 192.168.7.10:2377    # join the new node to the cluster (command copied from node01)

Error response from daemon: manager stopped: can't initialize raft node: rpc error: code = Unavailable desc = could not connect to prospective new cluster member using its advertised address: rpc error: code = Unavailable desc = connection error: desc = "transport: Error while dialing: dial tcp 192.168.7.13:2377: connect: no route to host"

[root@node04 ~]# systemctl status firewalld

● firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled; preset: enabled)

Active: active (running) since Tue 2025-06-10 22:07:12 CST; 38min ago

Docs: man:firewalld(1)

Main PID: 761 (firewalld)

Tasks: 2 (limit: 22925)

Memory: 45.3M

CPU: 744ms

CGroup: /system.slice/firewalld.service

└─761 /usr/bin/python3 -s /usr/sbin/firewalld --nofork --nopid

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -F>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -X>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -F>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -X>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -F>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -X>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -F>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -X>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -F>

Jun 10 22:07:13 node04.benet.com firewalld[761]: WARNING: COMMAND_FAILED: '/usr/sbin/ip6tables -w10 -t filter -X>

[root@node04 ~]# systemctl stop firewalld

[root@node04 ~]# docker swarm join --token SWMTKN-1-4hxebsjeyisyajtqm1ow8pp9p5rhlv9zs1paq32y78zmacp86m-72azhfad1z3n2rruvcj9s4ng3 192.168.7.10:2377    # join the new node to the cluster

This node joined a swarm as a manager.

[root@node04 ~]#
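Stopping firewalld is the quick fix used in this lab. A narrower alternative, sketched here as an assumption rather than something the lab tested, is to leave firewalld running and open only the ports that swarm mode uses: 2377/tcp for cluster management, 7946/tcp and 7946/udp for communication among nodes, and 4789/udp for overlay network traffic. The helper name `swarm_firewall_commands` is hypothetical; it only prints the commands so that they can be reviewed first.

```shell
# swarm_firewall_commands (hypothetical helper):
# print the firewall-cmd calls that open the ports used by swarm mode.
swarm_firewall_commands() {
    # 2377/tcp: cluster management; 7946/tcp+udp: node communication;
    # 4789/udp: overlay network (VXLAN) traffic.
    for port in 2377/tcp 7946/tcp 7946/udp 4789/udp; do
        printf 'firewall-cmd --permanent --add-port=%s\n' "$port"
    done
    printf 'firewall-cmd --reload\n'
}

# Review the output, then apply it as root on every node:
#   swarm_firewall_commands | sh
```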

[root@node01 ~]# docker node ls    # view the cluster information on the manager node node01

ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS ENGINE VERSION

tagtz3l8wzcfldglq4ctn1vtk Unknown Active

tzief1hhk4knlk9ap5aags2rr * node01.benet.com Ready Active Leader 27.4.1

i21ublrvu993qpj8ji2ryuc97 node02.benet.com Ready Active 27.4.1

3ppty8565te0g7f34byoyp7x8 node03.benet.com Ready Active 28.2.2

33ati9i1fayisxjjyjofckg6s node04.benet.com Ready Active Reachable 28.1.1

[root@node01 ~]# docker service scale swarm_cluster=4    # scale the swarm service to four replicas

swarm_cluster scaled to 4

overall progress: 4 out of 4 tasks

1/4: running

2/4: running

3/4: running

4/4: running

verify: Service swarm_cluster converged

[root@node01 ~]# docker service ps swarm_cluster    # list the running tasks and check that there are four

ID NAME IMAGE NODE DESIRED STATE CURRENT STATE ERROR PORTS

c79c0t65bkif swarm_cluster.1 web_server:latest node01.benet.com Running Running less than a second ago

rg5ygj87yz1w swarm_cluster.2 web_server:latest node02.benet.com Running Running less than a second ago

gn3nygv9kooi swarm_cluster.3 web_server:latest node03.benet.com Running Running less than a second ago

pwiltd0ke24w swarm_cluster.4 web_server:latest node04.benet.com Running Running about a minute ago

[root@node01 ~]# curl 192.168.7.10    # access the manager node's IP

node01.benet.com

[root@node01 ~]# curl 192.168.7.10    # round-robin

node02.benet.com

[root@node01 ~]# curl 192.168.7.10    # round-robin

node04.benet.com

[root@node01 ~]# curl 192.168.7.10    # round-robin

node03.benet.com

X. Remove nodes and the cluster

[root@node01 ~]# docker node update --availability drain i21ublrvu993qpj8ji2ryuc97    # drain the node so that no tasks run on it

i21ublrvu993qpj8ji2ryuc97

[root@node01 ~]# docker swarm leave    # leave the swarm

[root@node01 ~]#

[root@node01 ~]# docker node rm 6whtoqrhkzv3ax4xy9ab20gmy    # remove the node (run on the manager)
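The three commands above run in different places, which is easy to get wrong. The sketch below prints the usual order for one node, with a prefix that shows where each command is run; the helper name `removal_plan` and the output format are assumptions for illustration. For a manager node it adds a `docker node demote` step before the node leaves.

```shell
# removal_plan NODE [ROLE] (hypothetical helper):
# print the commands for removing NODE from the swarm, in order.
# ROLE is "worker" (default) or "manager".
removal_plan() {
    # Stop scheduling tasks on the node and move its tasks elsewhere.
    echo "manager\$ docker node update --availability drain $1"
    # A manager is demoted to a worker before it leaves.
    if [ "${2:-worker}" = manager ]; then
        echo "manager\$ docker node demote $1"
    fi
    # Run on the node that is being removed.
    echo "$1\$ docker swarm leave"
    # Back on a manager: delete the entry of the node that has left.
    echo "manager\$ docker node rm $1"
}

# Example:
#   removal_plan node02.benet.com
#   removal_plan node04.benet.com manager
```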

Adding and removing nodes

[root@node01 ~]# docker swarm init    # initialize the swarm cluster

Swarm initialized: current node (bvp2xk3ju7wh9bff5kar63rrp) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-247tqn51drak1zu683haeunra0mqbgidg1xad0l4sjqszbmao7-bda3i3e1ivl1awsbxh3xbfp9k 192.168.7.10:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.