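Key points:

Ingress-Nginx controller on K8s: a hands-on production configuration guide, with a related nginx and Tomcat lab

Lab environment: five virtual machines, restored to the high-availability cluster state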

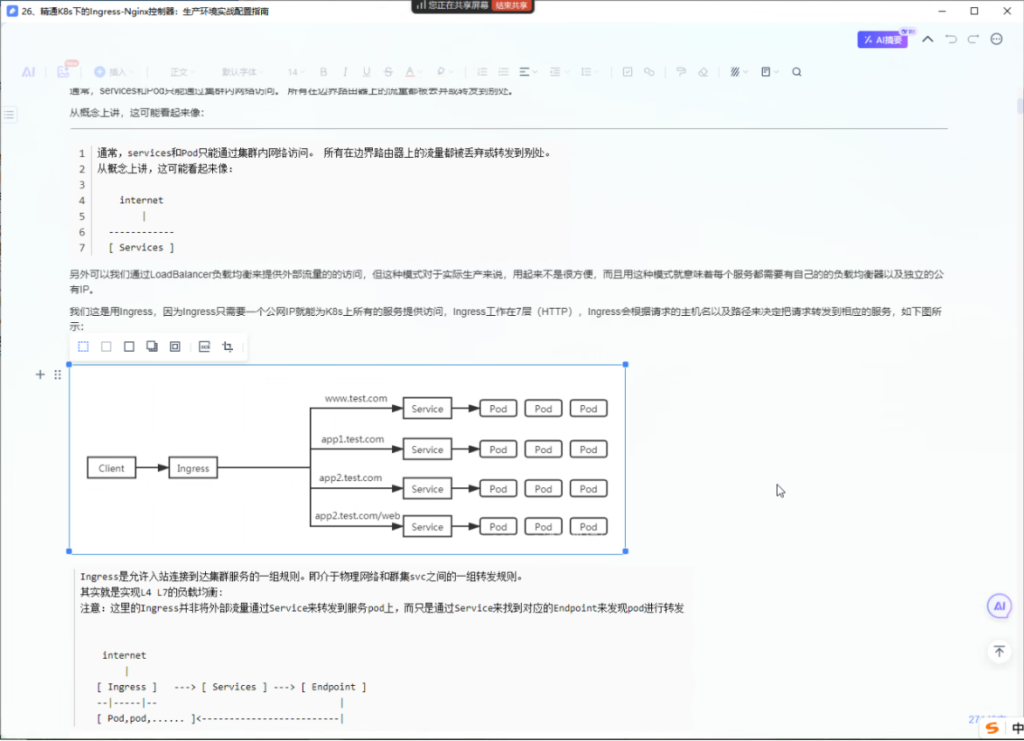

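How the Ingress Controller works

For Ingress resources to work, the cluster must run an Ingress Controller that interprets the Ingress forwarding rules. The Ingress Controller receives a request, matches it against the Ingress rules and forwards it to the backend Service; the Service forwards it to a Pod, which finally handles the request. In Kubernetes, Service, Ingress and Ingress Controller relate to one another as follows:

A Service is an abstraction over the real backend service; one Service can represent multiple identical backend instances.

An Ingress is a set of reverse-proxy rules that specify which Service an HTTP/HTTPS request should be forwarded to, for example routing requests to different Services according to the Host and URL path of the request.

An Ingress Controller is a reverse-proxy program responsible for interpreting the Ingress rules. When an Ingress is added, removed or modified, the Ingress Controller promptly updates its own forwarding rules, and when it receives a request it forwards the request to the corresponding Service according to those rules.

The Ingress Controller watches the API Server for changes to Ingress resources, dynamically generates the configuration file needed by the load balancer (for example nginx.conf for Nginx), and then reloads the load balancer (for example by running nginx -s reload) so that the new routing rules take effect.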
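The regenerate-then-reload cycle described above can be sketched in shell. This is only an illustration, not the controller's actual code: the function name `reload_if_valid` is hypothetical and an `nginx` binary on the PATH is assumed. `nginx -t` tests the configuration and `nginx -s reload` asks the running master process to reload it.

```shell
# Hypothetical helper: reload Nginx only when the new configuration parses.
# Assumes an `nginx` binary on PATH and a running Nginx master process.
reload_if_valid() {
    if nginx -t; then
        # Configuration is valid: signal the master process to reload it.
        nginx -s reload
    else
        # Leave the running configuration untouched.
        echo "config test failed, keeping the running configuration" >&2
        return 1
    fi
}
```

Testing before reloading means a broken generated file never replaces a configuration that is currently serving traffic.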

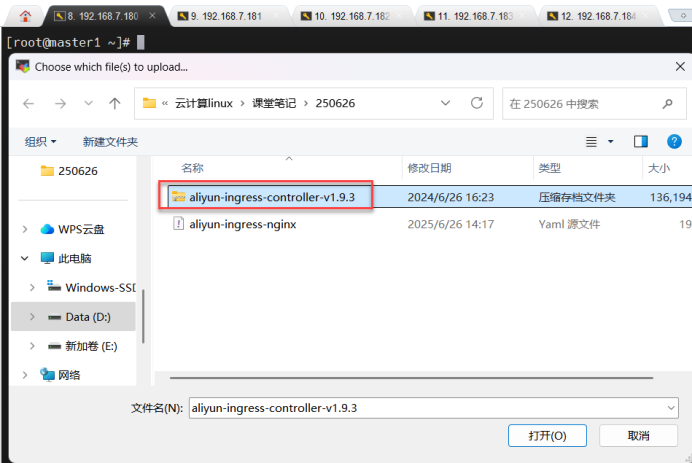

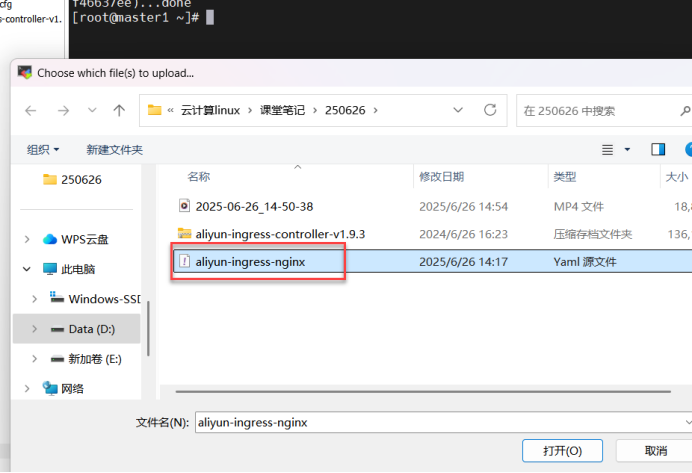

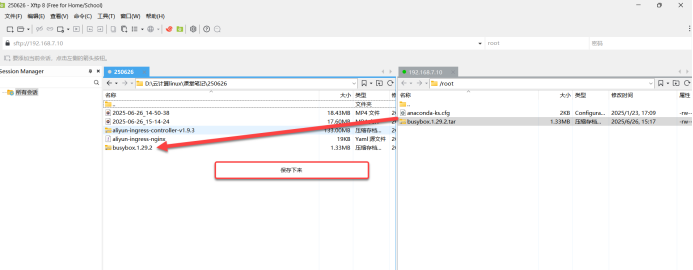

Upload the nginx-ingress controller files to all nodes:

1. Import the Nginx-ingress image on every node (Ingress acts as the reverse proxy)

[root@1-5 ~]# ctr -n k8s.io images import aliyun-ingress-controller-v1.9.3.tar


unpacking registry-cn-hangzhou.ack.aliyuncs.com/acs/aliyun-ingress-controller:v1.9.3-aliyun.1 (sha256:04cb236ebf4dd1f868399cb79425027d64f6e4d95a38a980cf753573f46637ee)...done

[root@master1 ~]#

[root@master1 ~]# ls

aliyun-ingress-controller-v1.9.3.tar calico.tar.gz kubeadm.yaml

aliyun-ingress-nginx.yaml calico.yaml nginx-ingress-controller_v1.11.2.tar

anaconda-ks.cfg kubeadm-init.log

[root@master1 ~]#

2. Apply the aliyun ingress manifest

[root@master1 ~]# kubectl apply -f aliyun-ingress-nginx.yaml


serviceaccount/ingress-nginx created

role.rbac.authorization.k8s.io/ingress-nginx created

clusterrole.rbac.authorization.k8s.io/ingress-nginx created

rolebinding.rbac.authorization.k8s.io/ingress-nginx created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx created

service/nginx-ingress-lb created

service/ingress-nginx-controller-admission created

configmap/nginx-configuration created

configmap/tcp-services created

configmap/udp-services created

daemonset.apps/nginx-ingress-controller created

ingressclass.networking.k8s.io/nginx-master created

validatingwebhookconfiguration.admissionregistration.k8s.io/ingress-nginx-admission created

serviceaccount/ingress-nginx-admission created

clusterrole.rbac.authorization.k8s.io/ingress-nginx-admission created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

role.rbac.authorization.k8s.io/ingress-nginx-admission created

rolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

job.batch/ingress-nginx-admission-create created

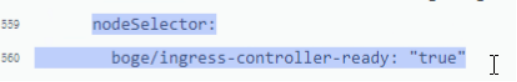

job.batch/ingress-nginx-admission-patch created

The manifest requires the nodes to be labeled; the controller Pods only show up after that.
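Why the label matters can be checked on the cluster itself. The sketch below assumes the DaemonSet is named `nginx-ingress-controller` and lives in `kube-system` (as the later Pod listing suggests), and that the manifest pins it with a `nodeSelector` rather than node affinity. Both function names are hypothetical.

```shell
# Print the nodeSelector of the controller DaemonSet (assumed name and namespace).
show_node_selector() {
    kubectl -n kube-system get daemonset nginx-ingress-controller \
        -o jsonpath='{.spec.template.spec.nodeSelector}'
}

# List the nodes that already carry a given label, e.g.
#   nodes_with_label boge/ingress-controller-ready=true
nodes_with_label() {
    kubectl get nodes -l "$1" -o name
}
```

If `nodes_with_label` prints nothing, no node satisfies the selector yet and the DaemonSet has nowhere to schedule its Pods.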

3. Label node1 and node2:

[root@master1 ~]# kubectl label node node1 boge/ingress-controller-ready=true    # label node1

node/node1 labeled

[root@master1 ~]# kubectl label node node2 boge/ingress-controller-ready=true    # label node2

node/node2 labeled

4. List all Pods in the kube-system namespace

[root@master1 ~]# kubectl -n kube-system get pod

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-7dc5458bc6-c9pdn 1/1 Running 1 (16m ago) 2d4h

calico-node-7ztlx 1/1 Running 1 (16m ago) 2d4h

calico-node-86w2s 1/1 Running 1 (17m ago) 2d4h

calico-node-fp6r7 1/1 Running 1 (16m ago) 2d4h

calico-node-fwv59 1/1 Running 1 (16m ago) 2d4h

calico-node-xbwwc 1/1 Running 1 (16m ago) 2d4h

coredns-7c445c467-6dlt5 1/1 Running 1 (16m ago) 2d6h

coredns-7c445c467-wjskw 1/1 Running 1 (16m ago) 2d6h

etcd-master1 1/1 Running 2 (17m ago) 2d6h

etcd-master2 1/1 Running 2 (16m ago) 2d6h

etcd-master3 1/1 Running 2 (16m ago) 2d6h

ingress-nginx-admission-create-z4f9m 0/1 Completed 0 91s

ingress-nginx-admission-patch-bvfv5 0/1 Completed 0 91s

kube-apiserver-master1 1/1 Running 2 (17m ago) 2d6h

kube-apiserver-master2 1/1 Running 2 (16m ago) 2d6h

kube-apiserver-master3 1/1 Running 2 (16m ago) 2d6h

kube-controller-manager-master1 1/1 Running 2 (17m ago) 2d6h

kube-controller-manager-master2 1/1 Running 2 (16m ago) 2d6h

kube-controller-manager-master3 1/1 Running 2 (16m ago) 2d6h

kube-proxy-46hzl 1/1 Running 1 (16m ago) 2d5h

kube-proxy-4x9w7 1/1 Running 1 (16m ago) 2d5h

kube-proxy-h2rl5 1/1 Running 2 (16m ago) 2d6h

kube-proxy-htzg7 1/1 Running 2 (17m ago) 2d6h

kube-proxy-sc6fg 1/1 Running 2 (16m ago) 2d6h

kube-scheduler-master1 1/1 Running 2 (17m ago) 2d6h

kube-scheduler-master2 1/1 Running 2 (16m ago) 2d6h

kube-scheduler-master3 1/1 Running 2 (16m ago) 2d6h

nginx-ingress-controller-6dntr 1/1 Running 0 28s

nginx-ingress-controller-q69fd 1/1 Running 0 33s
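Instead of re-running the listing until both controller Pods report Running, the rollout can be awaited. A minimal sketch, assuming the DaemonSet name and namespace seen in the output above; `wait_for_controller` is a hypothetical helper.

```shell
# Block until every Pod of the controller DaemonSet is ready, or time out.
# The default timeout of 120s is an arbitrary choice for this sketch.
wait_for_controller() {
    timeout="${1:-120s}"
    kubectl -n kube-system rollout status daemonset/nginx-ingress-controller \
        --timeout="$timeout"
}
```

Calling `wait_for_controller 60s` would give up after one minute and return a non-zero status, which makes the check usable in scripts.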

[root@master1 ~]#

5. Show which node each Pod runs on

[root@master1 ~]# kubectl -n kube-system get pod -o wide

-n selects the namespace, here kube-system; -o wide adds node and IP details for each Pod (aliyun-ingress information is not shown).

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-7dc5458bc6-c9pdn 1/1 Running 2 (98s ago) 2d5h 10.244.136.7

nginx-ingress-controller-6dntr 1/1 Running 1 (92s ago) 5m12s 192.168.7.184 node2 <none> <none>

nginx-ingress-controller-q69fd 1/1 Running 1 (94s ago) 5m17s 192.168.7.183 node1 <none> <none>

[root@master1 ~]#

6. On a separate machine, pull the image and save it to a file:

[root@Server10 ~]# systemctl status docker

● docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; preset: disabled)

Active: active (running) since Thu 2025-06-26 15:10:32 CST; 1min 4s ago

TriggeredBy: ● docker.socket

Docs: https://docs.docker.com

Main PID: 1131 (dockerd)

Tasks: 9

Memory: 101.3M

CPU: 402ms

CGroup: /system.slice/docker.service

└─1131 /usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.862124213+08:00" level=warning msg="Erro>

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.883357479+08:00" level=info msg="Loading>

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.893807841+08:00" level=info msg="Docker >

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.893896107+08:00" level=info msg="Initial>

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.896529767+08:00" level=warning msg="CDI >

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.896557927+08:00" level=warning msg="CDI >

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.912830212+08:00" level=info msg="Complet>

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.923614845+08:00" level=info msg="Daemon >

6月 26 15:10:32 Server10 dockerd[1131]: time="2025-06-26T15:10:32.923763584+08:00" level=info msg="API lis>

6月 26 15:10:32 Server10 systemd[1]: Started Docker Application Container Engine.

[root@Server10 ~]#

[root@Server10 ~]# docker pull busybox:1.29.2

[root@Server10 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

nginx 1.26.0 94543a6c1aef 13 months ago 188MB

busybox 1.29.2 e1ddd7948a1c 6 years ago 1.16MB

[root@Server10 ~]#

[root@Server10 ~]# docker save busybox.1.29.2.tar busybox:1.29.2

cowardly refusing to save to a terminal. Use the -o flag or redirect

[root@Server10 ~]# docker save -o busybox.1.29.2.tar busybox:1.29.2    # busybox.1.29.2.tar is the saved copy of the busybox:1.29.2 image

[root@Server10 ~]# ls

anaconda-ks.cfg busybox.1.29.2.tar

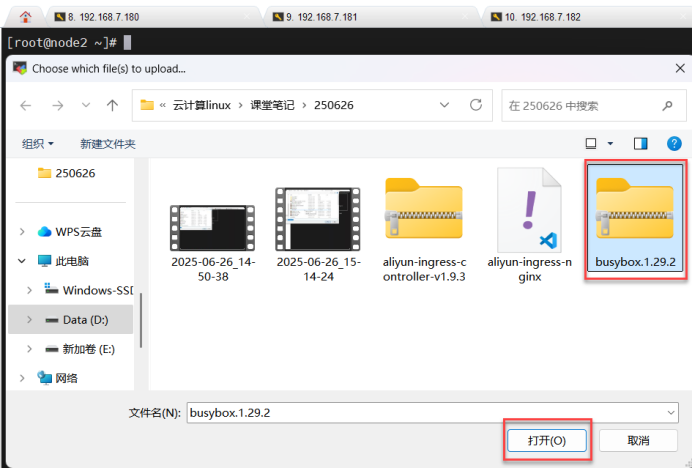
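Saving the image and copying it to the worker nodes can be combined into one helper. The source does not say how the tar file was transferred, so the use of `scp`, root SSH access and the function name `ship_image` are all assumptions of this sketch.

```shell
# Hypothetical helper: save an image to a tar file, then copy it to each host.
# Usage: ship_image IMAGE TARBALL HOST...
ship_image() {
    image="$1"; tarball="$2"; shift 2
    docker save -o "$tarball" "$image" || return 1
    for host in "$@"; do
        # An empty remote path means the remote user's home directory.
        scp "$tarball" "root@${host}:" || return 1
    done
}
```

With the addresses from this lab, the call would look like `ship_image busybox:1.29.2 busybox.1.29.2.tar 192.168.7.183 192.168.7.184`.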

[root@Server10 ~]#

7. Upload the saved images to node1 (192.168.7.183) and node2 (192.168.7.184)

[root@node1 ~]# ls

aliyun-ingress-controller-v1.9.3.tar anaconda-ks.cfg busybox-1-28.tar.gz busybox.1.29.2.tar calico.tar.gz nginx-1.21.6.tar

[root@node1 ~]#

[root@node2 ~]# ls

aliyun-ingress-controller-v1.9.3.tar anaconda-ks.cfg busybox-1-28.tar.gz busybox.1.29.2.tar calico.tar.gz nginx-1.21.6.tar

[root@node2 ~]#

8. Import the busybox image on both node1 and node2

[root@node1 ~]# ctr -n k8s.io images import busybox-1-28.tar.gz

unpacking docker.io/library/busybox:1.28 (sha256:585093da3a716161ec2b2595011051a90d2f089bc2a25b4a34a18e2cf542527c)...done

[root@node1 ~]#

[root@node2 ~]# ctr -n k8s.io images import busybox-1-28.tar.gz

unpacking docker.io/library/busybox:1.28 (sha256:585093da3a716161ec2b2595011051a90d2f089bc2a25b4a34a18e2cf542527c)...done

[root@node2 ~]#

[root@node1 ~]# ctr -n k8s.io images import nginx-1.21.6.tar    # import the nginx 1.21.6 image on node1

unpacking docker.io/library/nginx:1.21.6 (sha256:94b808e393739b5363decf631a746d0241083d40eb05f07200a6d1c0c16f54b8)...done

[root@node1 ~]#

[root@node2 ~]# ctr -n k8s.io images import nginx-1.21.6.tar    # import the nginx 1.21.6 image on node2

unpacking docker.io/library/nginx:1.21.6 (sha256:94b808e393739b5363decf631a746d0241083d40eb05f07200a6d1c0c16f54b8)...done
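After importing, it can help to confirm that a node holds the exact image reference a Pod will ask for. A minimal sketch, assuming containerd's `ctr` is available on the node; `image_present` is a hypothetical helper name.

```shell
# Succeed only if the exact image reference exists in the containerd
# namespace used by the kubelet (k8s.io). `ls -q` prints references only.
image_present() {
    ctr -n k8s.io images ls -q | grep -Fxq "$1"
}
```

For example, `image_present docker.io/library/nginx:1.21.6` should succeed on a node after the import shown above. A reference that was never imported, such as the `registry.cn-hangzhou.aliyuncs.com/acs/busybox:v1.29.2` image named in the manifest of step 9, would be reported as missing until the node pulls it from the registry.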

[root@node2 ~]#

9. Create the nginx manifest

[root@master1 ~]# vim nginx.yaml

[root@master1 ~]#

---
kind: Service                      # resource type: Service
apiVersion: v1                     # API version v1
metadata:                          # metadata: the Service is named new-nginx
  name: new-nginx
spec:                              # specification of the resource
  selector:                        # label selector that picks the backend Pods
    app: new-nginx                 # Pods labeled app=new-nginx
  ports:                           # port definitions
    - name: http-port              # port name for the web Pods
      port: 80                     # Service port 80
      protocol: TCP                # protocol TCP
      targetPort: 80               # target (container) port 80
---
# Ingress configuration for newer Kubernetes versions
apiVersion: networking.k8s.io/v1   # API version networking.k8s.io/v1
kind: Ingress                      # resource type: Ingress
metadata:                          # metadata
  name: new-nginx                  # the Ingress is named new-nginx
  annotations:                     # annotations
    kubernetes.io/ingress.class: "nginx"                       # Ingress class: nginx
    nginx.ingress.kubernetes.io/force-ssl-redirect: "true"     # force redirect to HTTPS
    nginx.ingress.kubernetes.io/whitelist-source-range: 0.0.0.0/0   # whitelist: any source IP
    # configuration snippet injected into the generated Nginx config
    nginx.ingress.kubernetes.io/configuration-snippet: |
      if ($host != 'www.benet.com' ) {
        # any host other than www.benet.com is permanently redirected to https://www.benet.com
        rewrite ^ https://www.benet.com$request_uri permanent;
      }
spec:                              # specification of the resource
  rules:                           # routing rules
    - host: benet.com              # first host: benet.com
      http:                        # HTTP rules
        paths:                     # paths
          - backend:               # backend
              service:             # Service to forward to
                name: new-nginx
                port:              # Service port 80
                  number: 80
            path: /                # the root path
            pathType: Prefix       # prefix match
    - host: m.benet.com            # second host: m.benet.com
      http:
        paths:
          - backend:
              service:
                name: new-nginx
                port:
                  number: 80
            path: /
            pathType: Prefix
    - host: www.benet.com          # third host: www.benet.com
      http:
        paths:
          - backend:
              service:
                name: new-nginx
                port:
                  number: 80
            path: /
            pathType: Prefix
  tls:                             # all three hosts are served over HTTPS
    - hosts:
        - benet.com
        - m.benet.com
        - www.benet.com
      secretName: benet-com-tls
# tls secret create command:
#   kubectl -n <namespace> create secret tls benet-com-tls --key benet.key --cert benet.csr
---
apiVersion: apps/v1                # apps/v1; a Deployment runs a stateless workload
kind: Deployment
metadata:
  name: new-nginx                  # the Deployment is named new-nginx
  labels:                          # labels
    app: new-nginx
spec:                              # specification of the resource
  replicas: 3  # can be chosen according to the number of worker nodes
  selector:                        # label selector
    matchLabels:
      app: new-nginx               # matches Pods labeled app=new-nginx
  template:                        # Pod template
    metadata:                      # metadata
      labels:                      # label: app=new-nginx
        app: new-nginx
    spec:
      containers:                  # containers
#--------------------------------------------------
        - name: new-nginx
          image: nginx:1.21.6      # image nginx:1.21.6
          env:                     # environment variables
            - name: TZ             # time zone
              value: Asia/Shanghai
          ports:
            - containerPort: 80    # container port 80
          volumeMounts:            # volume mounts
            - name: html-files     # volume name: html-files
              mountPath: "/usr/share/nginx/html"   # mounted at /usr/share/nginx/html
#--------------------------------------------------
        - name: busybox
          image: registry.cn-hangzhou.aliyuncs.com/acs/busybox:v1.29.2
          args:
            - /bin/sh
            - -c
            - >
              while :; do
                if [ -f /html/index.html ];then
                  echo "[$(date +%F\ %T)] ${MY_POD_NAMESPACE}-${MY_POD_NAME}-${MY_POD_IP}" > /html/index.html
                  sleep 1
                else
                  touch /html/index.html
                fi
              done
          env:
            - name: TZ
              value: Asia/Shanghai
            - name: MY_POD_NAME
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: metadata.name
            - name: MY_POD_NAMESPACE
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: metadata.namespace
            - name: MY_POD_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.podIP
          volumeMounts:
            - name: html-files
              mountPath: "/html"
            - mountPath: /etc/localtime
              name: tz-config
#--------------------------------------------------
      volumes:
        - name: html-files
          emptyDir:
            medium: Memory
            sizeLimit: 10Mi
        - name: tz-config
          hostPath:
            path: /usr/share/zoneinfo/Asia/Shanghai
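The effect of the configuration-snippet annotation in the Ingress can be mirrored in a few lines of shell. This is only a model of the decision Nginx makes, not something the controller runs; `redirect_target` is a hypothetical name.

```shell
# Model of the snippet: any host other than www.benet.com is answered with a
# permanent redirect to https://www.benet.com plus the original request URI.
# Prints the redirect target, or nothing when no redirect applies.
redirect_target() {
    host="$1"; request_uri="$2"
    if [ "$host" != "www.benet.com" ]; then
        echo "https://www.benet.com${request_uri}"
    fi
}

redirect_target benet.com /index.html       # prints https://www.benet.com/index.html
redirect_target www.benet.com /index.html   # prints nothing
```

So benet.com and m.benet.com still need their own rules and certificate coverage, because the first request reaches those host names before the redirect is issued.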

---

10. Apply the nginx manifest

[root@master1 ~]# kubectl apply -f nginx.yaml

service/new-nginx created

Warning: annotation "kubernetes.io/ingress.class" is deprecated, please use 'spec.ingressClassName' instead

ingress.networking.k8s.io/new-nginx created

deployment.apps/new-nginx created

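[root@master1 ~]# kubectl get ingress    # list Ingress resources

NAME CLASS HOSTS ADDRESS PORTS AGE

new-nginx <none> benet.com,m.benet.com,www.benet.com 80, 443 14s

The hosts listed are the ones defined in the manifest.

Create a self-signed HTTPS certificate

[root@master1 ~]#

11. Generate the private key benet.key

[root@master1 ~]# openssl genrsa -out benet.key 2048    # generate the private key benet.key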

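[root@master1 ~]#

[root@master1 ~]# openssl req -new -x509 -key benet.key -out benet.csr -days 3650 -subj /CN=*.benet.com

Using the private key benet.key, this generates a self-signed wildcard certificate intended for the three host names benet.com, m.benet.com and www.benet.com. Despite the .csr extension, the file written by openssl req -x509 is a certificate, not a signing request.

The three host names added to the Ingress are meant to share this one certificate.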
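Two caveats apply to a certificate that carries only CN=*.benet.com. A wildcard matches one-label subdomains such as www and m but not the bare benet.com, and many current clients check subjectAltName rather than CN. The transcript also never creates the benet-com-tls Secret that the Ingress refers to. The sketch below covers both points, assuming OpenSSL 1.1.1 or later (for `-addext`). The function names and the `benet.crt` file name used in the usage note are hypothetical.

```shell
# Create a key and a self-signed certificate whose SAN list covers the bare
# domain as well as the wildcard. Usage: make_cert KEYFILE CERTFILE
make_cert() {
    key="$1"; crt="$2"
    openssl req -x509 -newkey rsa:2048 -nodes \
        -keyout "$key" -out "$crt" -days 3650 \
        -subj "/CN=*.benet.com" \
        -addext "subjectAltName=DNS:benet.com,DNS:*.benet.com"
}

# Store the pair as the TLS Secret named in the Ingress (current namespace).
# Usage: create_tls_secret KEYFILE CERTFILE
create_tls_secret() {
    kubectl create secret tls benet-com-tls --key "$1" --cert "$2"
}
```

A run would be `make_cert benet.key benet.crt` followed by `create_tls_secret benet.key benet.crt`; the Secret has to live in the same namespace as the Ingress.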

[root@master1 ~]#

[root@master1 ~]# ll    # list files and directories

total 953800

-rw-r--r-- 1 root root 139462656 Jun 26 14:53 aliyun-ingress-controller-v1.9.3.tar

-rw-r--r-- 1 root root 19309 Jun 26 14:57 aliyun-ingress-nginx.yaml

-rw-------. 1 root root 1413 Feb 27 15:06 anaconda-ks.cfg

-rw-r--r-- 1 root root 1119 Jun 26 16:00 benet.csr    # certificate

-rw------- 1 root root 1704 Jun 26 15:57 benet.key    # private key

-rw-r--r-- 1 root root 548179968 Jun 24 09:37 calico.tar.gz

-rw-r--r-- 1 root root 244818 Jun 24 10:03 calico.yaml

-rw-r--r-- 1 root root 5444 Jun 24 08:25 kubeadm-init.log

-rw-r--r-- 1 root root 1106 Jun 24 08:24 kubeadm.yaml

-rw-r--r-- 1 root root 288751104 Jun 26 14:45 nginx-ingress-controller_v1.11.2.tar

-rw-r--r-- 1 root root 3644 Jun 26 15:55 nginx.yaml

[root@master1 ~]#

12. Inspect the new-nginx Ingress

[root@master1 ~]# kubectl get ingress new-nginx -o yaml    # view the new-nginx Ingress definition as created in the cluster

nginx.ingress.kubernetes.io/configuration-snippet: |

if ($host != 'www.benet.com' ) {

rewrite ^ https://www.benet.com$request_uri permanent;

ingress:

- ip: 10.111.132.159

[root@master1 ~]#

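tls:

- hosts:

- benet.com    # note this entry

- m.benet.com

- www.benet.com

secretName: benet-com-tls

status:

loadBalancer:    # load balancer

ingress:    # load balancing goes through the Ingress (which can balance at layer 4, the transport layer, and at layer 7, the application layer)

- ip: 10.101.102.78    # an IP address has been assigned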

[root@master1 ~]#

[root@master1 ~]# kubectl get ingress

NAME CLASS HOSTS ADDRESS PORTS AGE

new-nginx <none> benet.com,m.benet.com,www.benet.com 10.111.132.159 80, 443 16m

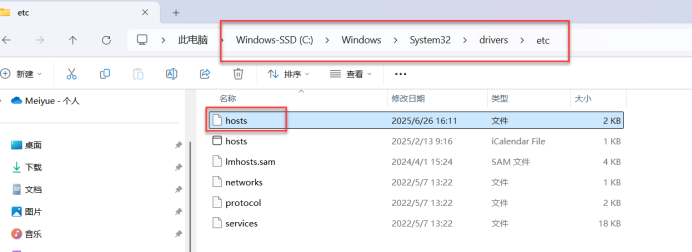

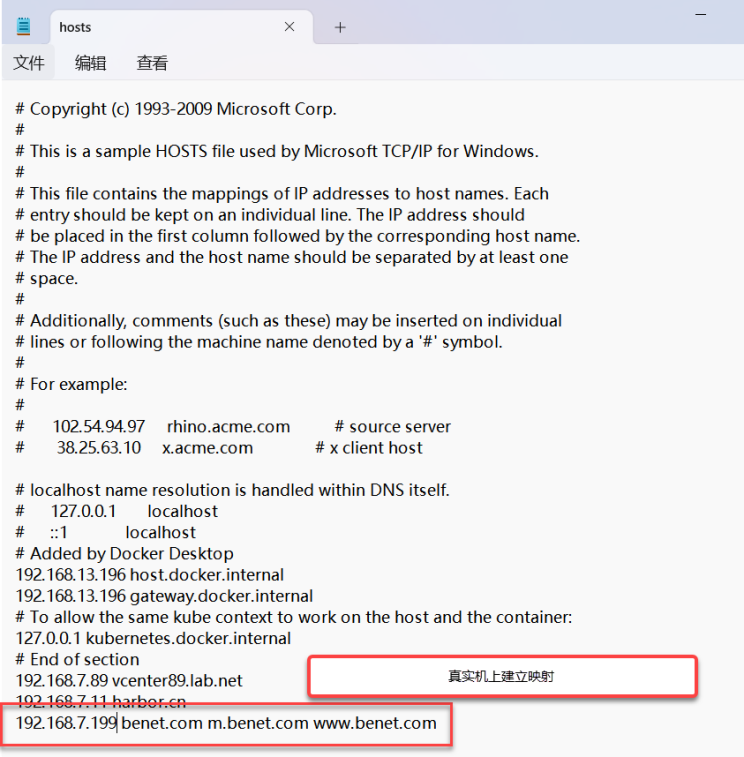

[root@master1 ~]#

13. Set up the host name mapping:
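The source gives no commands for this step. One common way to map the three host names in a lab without DNS is an entry in the client's hosts file. The sketch assumes the controller is reachable on a node address such as 192.168.7.183 (the node IP reported for one controller Pod earlier); `hosts_line` is a hypothetical helper.

```shell
# Print a hosts-file line that maps the three Ingress host names to one IP.
hosts_line() {
    printf '%s benet.com m.benet.com www.benet.com\n' "$1"
}

# Example (needs root on the client machine):
#   hosts_line 192.168.7.183 >> /etc/hosts
```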

14. Test and verify:
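The source gives no commands for this step either. A minimal check, assuming the host names already resolve to a node running the controller and that `curl` is installed on the client; `check_host` is a hypothetical helper.

```shell
# Request the root path of a host over HTTPS and print the status code and,
# if the server redirects, the redirect target.
#   -k  accept the self-signed certificate
#   -s  no progress output
#   -o /dev/null  discard the response body
check_host() {
    curl -k -s -o /dev/null -w '%{http_code} %{redirect_url}\n' "https://$1/"
}
```

Given the configuration-snippet in the manifest, one would expect `check_host benet.com` and `check_host m.benet.com` to report a permanent redirect to https://www.benet.com/, while `check_host www.benet.com` should be served directly by one of the new-nginx Pods.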