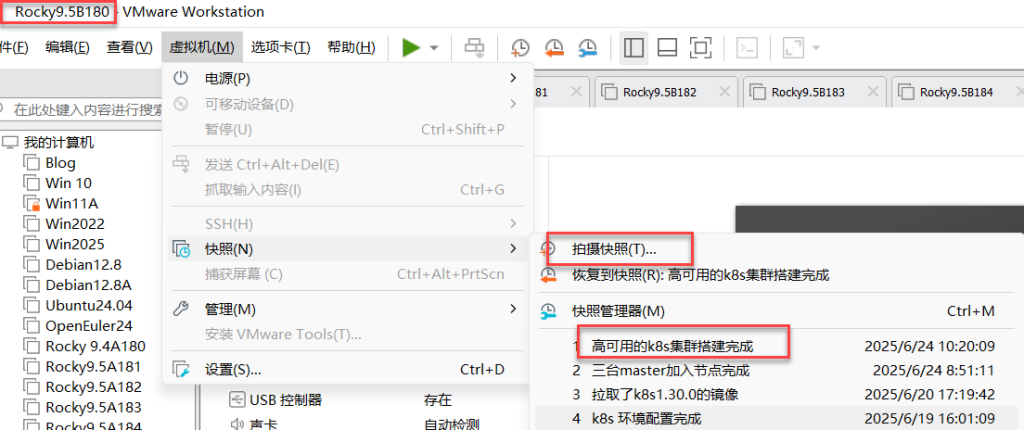

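1. Lab environment:

All five machines are restored to the snapshot in which the base k8s environment configuration is complete.

2. Create the Aliyun repository for k8s v1.30 on all five machines

[root@1-5 ~]# cat > /etc/yum.repos.d/kubernetes.repo <<EOF    # run on every node: create the Aliyun repository for k8s v1.30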

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/rpm/

enabled=1

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/rpm/repodata/repomd.xml.key

EOF

[root@master1 ~]# dnf clean all    # clear the cache

32 files removed

[root@master1 ~]# dnf makecache    # rebuild the metadata cache

Docker CE Stable - x86_64 160 kB/s | 73 kB 00:00

Kubernetes 82 kB/s | 35 kB 00:00

Rocky Linux 9 - BaseOS 708 kB/s | 2.5 MB 00:03

Rocky Linux 9 - AppStream 3.5 MB/s | 9.6 MB 00:02

Rocky Linux 9 - Extras 12 kB/s | 16 kB 00:01

Metadata cache created.
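Since the same repository file has to exist on all five machines, it can help to generate it from one function instead of retyping the heredoc. The following is a minimal sketch; the function name `k8s_repo_stanza` is made up for illustration, and only the URLs come from the steps above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a kubernetes.repo stanza for one minor version.
k8s_repo_stanza() {
  local minor="$1"   # e.g. v1.30
  local base="https://mirrors.aliyun.com/kubernetes-new/core/stable/${minor}/rpm/"
  cat <<EOF
[kubernetes]
name=Kubernetes
baseurl=${base}
enabled=1
gpgcheck=1
gpgkey=${base}repodata/repomd.xml.key
EOF
}

# Usage (as root on every node):
#   k8s_repo_stanza v1.30 > /etc/yum.repos.d/kubernetes.repo
```

Keeping the version in one argument makes a later change of minor version a one-word edit.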

3. Install nginx, keepalived, and the nginx stream module on master1 and master2

[root@master1 ~]# dnf -y install nginx keepalived nginx-mod-stream    # install nginx, keepalived, and the nginx stream module

nginx load-balances the API servers on the master nodes, and keepalived provides high availability for the load balancer through a floating virtual IP (VIP).

(Another common way to build the same load balancer is haproxy + keepalived.)

[root@master2 ~]# dnf -y install nginx keepalived nginx-mod-stream

4. Back up and edit the nginx configuration file on master1 and master2

[root@master1 ~]# mv /etc/nginx/nginx.conf /etc/nginx/nginx.conf.bak    # back up the nginx configuration file

[root@master1 ~]# vim /etc/nginx/nginx.conf    # edit the nginx configuration file

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing for the apiserver on the three master nodes

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.7.180:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.7.181:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.7.182:6443 weight=5 max_fails=3 fail_timeout=30s;

}

server {

listen 16443; # nginx shares the host with a master node, so this port must not be 6443 or it would conflict with the apiserver

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

server {

listen 80 default_server;

server_name _;

location / {

}

}

}

[root@master2 ~]# vim /etc/nginx/nginx.conf    # master2 uses the same configuration as master1

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing for the apiserver on the three master nodes

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.7.180:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.7.181:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.7.182:6443 weight=5 max_fails=3 fail_timeout=30s;

}

server {

listen 16443; # nginx shares the host with a master node, so this port must not be 6443 or it would conflict with the apiserver

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

server {

listen 80 default_server;

server_name _;

location / {

}

}

}

5. Edit the keepalived configuration file on master1

[root@master1 ~]# vim /etc/keepalived/keepalived.conf    # edit the keepalived configuration file

! Configuration File for keepalived

global_defs {

notification_email {

1319276778@qq.com

}

router_id LVS_DEVEL

}

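vrrp_script check_nginx {    # define the nginx health-check script and its location

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER    # this node starts as the master

interface ens160    # network interface ens160

virtual_router_id 51

priority 100    # priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.7.199

}

track_script {    # keepalived only runs a vrrp_script that an instance tracks

check_nginx

}

}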
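The backup node's file, edited in the next step, differs from this one only in `state` and `priority`. As a rough sketch, those two changes can be applied with `sed` instead of by hand; the function name `to_backup_conf` is an assumption for illustration, and the values 100 and 90 are the ones used in this walkthrough.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite a MASTER keepalived.conf (stdin)
# into its BACKUP variant (stdout).
to_backup_conf() {
  sed -E \
    -e '/^[[:space:]]*state[[:space:]]/s/MASTER/BACKUP/' \
    -e '/^[[:space:]]*priority[[:space:]]/s/100/90/'
}

# Usage on master1 (assumes root SSH access to master2):
#   to_backup_conf < /etc/keepalived/keepalived.conf \
#     | ssh 192.168.7.181 'cat > /etc/keepalived/keepalived.conf'
```

Restricting each substitution to the line that starts with the keyword keeps the edit from touching other places where `100` or `MASTER` might appear.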

6. Copy the keepalived configuration file from master1 to master2 and edit it there

[root@master1 ~]# scp /etc/keepalived/keepalived.conf 192.168.7.181:/etc/keepalived/keepalived.conf    # copy the keepalived configuration file from master1 to master2

The authenticity of host '192.168.7.181 (192.168.7.181)' can't be established.

ED25519 key fingerprint is SHA256:xUDA0O+t2CzJjkoXTnPz4uWZHLsBka7X2jWeyssMSNo.

This host key is known by the following other names/addresses:

~/.ssh/known_hosts:4: master2

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added '192.168.7.181' (ED25519) to the list of known hosts.

keepalived.conf 100% 441 1.2MB/s 00:00

[root@master2 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

1319276778@qq.com

}

router_id LVS_DEVEL

}

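vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP    # this node starts as the backup

interface ens160

virtual_router_id 51

priority 90    # priority 90, lower than the master's 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.7.199

}

track_script {    # keepalived only runs a vrrp_script that an instance tracks

check_nginx

}

}

7. Create the nginx health-check shell script on master1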

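[root@master1 ~]# vim /etc/keepalived/check_nginx.sh    # create the shell script that checks nginx (shell scripts play the role that .bat batch files play on Windows, where they are mainly used for scheduled tasks and backups)

#!/bin/bash

# Count running nginx processes, excluding the grep itself and this script's own PID.

counter=$(ps -ef | grep nginx | grep sbin | grep -Ecv "grep|$$")

if [ "$counter" -eq 0 ]; then

# nginx is down: try to start it once

systemctl start nginx

sleep 2

counter=$(ps -ef | grep nginx | grep sbin | grep -Ecv "grep|$$")

if [ "$counter" -eq 0 ]; then

# nginx is still down: stop keepalived so the VIP moves to the other node

systemctl stop keepalived

fi

fi

8. Copy the nginx check script from master1 to master2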

[root@master1 ~]# scp /etc/keepalived/check_nginx.sh 192.168.7.181:/etc/keepalived/    # copy the nginx check script from master1 to master2

check_nginx.sh 100% 252 755.3KB/s 00:00
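The process-counting pipeline in check_nginx.sh has to filter out its own `grep` and the script's PID. One possible simplification, shown here only as a sketch, is to count nginx master processes by their process title; the function name `count_nginx_masters` is hypothetical and is not part of the script above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count nginx master processes in `ps` output read from stdin.
count_nginx_masters() {
  # The [n] bracket keeps this grep from matching its own command line.
  # grep -c prints 0 and exits non-zero when nothing matches, hence "|| true".
  grep -c '[n]ginx: master process' || true
}

# Usage:
#   if [ "$(ps -eo args | count_nginx_masters)" -eq 0 ]; then
#     echo "nginx is not running"
#   fi
```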

[root@master1 ~]# 九、master1上给nginx的shell脚本添加执行权限、重启nginx和keepalived

[root@master1 ~]# chmod +x /etc/keepalived/check_nginx.sh 给nginx的shell脚本添加执行权限

[root@master1 ~]# systemctl daemon-reload 重新加载系统进程

[root@master1 ~]# systemctl start nginx 启动nginx

[root@master1 ~]# systemctl enable nginx 设置nginx开机启动

[root@master1 ~]# systemctl start keepalived 启动keepalived

[root@master1 ~]# systemctl enable keepalived 设置keepalived开机启动

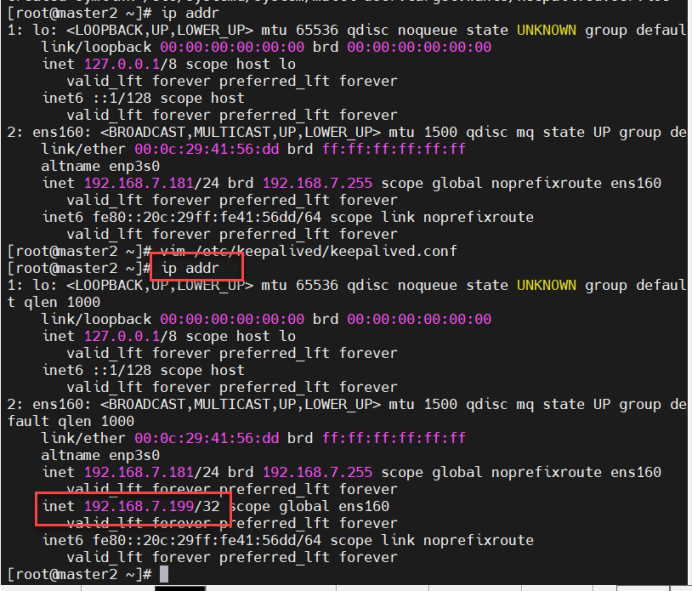

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.十、查看IP地址

[root@master1 ~]# ip addr 查看IP地址

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:36:46:9a brd ff:ff:ff:ff:ff:ff

altname enp3s0

inet 192.168.7.180/24 brd 192.168.7.255 scope global noprefixroute ens160

valid_lft forever preferred_lft forever

inet 192.168.7.199/32 scope global ens160

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe36:469a/64 scope link noprefixroute

valid_lft forever preferred_lft forever十一、master2上给nginx的shell脚本添加执行权限、重启nginx和keepalived

[root@master2 ~]# chmod +x /etc/keepalived/check_nginx.sh 给nginx的shell脚本添加执行权限

[root@master2 ~]# systemctl daemon-reload 重新加载系统进程

[root@master2 ~]# systemctl start nginx 启动nginx

[root@master2 ~]# systemctl enable nginx 设置nginx开机启动

Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

[root@master2 ~]# systemctl start keepalived 启动keepalived

[root@master2 ~]# systemctl enable keepalived 设置keepalived开机启动

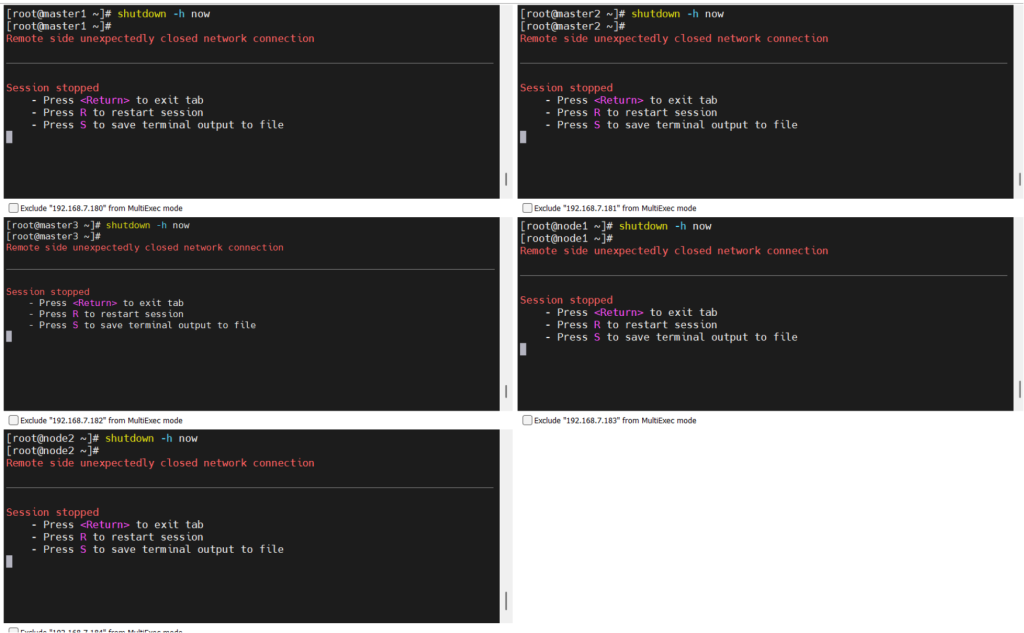

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.十二、Master1主节点关机,模拟master节点发生故障;

如果使用master1节点IP:7.180,主节点1坏了,集群整个就访问不了。但如果使用主备集群IP:7.199,如果主节点1坏了,自动切换至备用主节点master2上,为防止单点故障;
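To confirm the failover described above, it is enough to check which node currently lists the VIP among its addresses. The following sketch parses `ip -o -4 addr show` output; the function name `holds_vip` is an illustrative assumption, while the interface and VIP are the ones used in this setup.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed when the `ip -o -4 addr show` output on stdin
# contains the given VIP as an address.
holds_vip() {
  local vip="$1"
  # Escape the dots so they match literally, then require "inet <vip>/<prefix> ".
  grep -qE "inet ${vip//./\\.}/[0-9]+ "
}

# Usage on master1 or master2:
#   if ip -o -4 addr show dev ens160 | holds_vip 192.168.7.199; then
#     echo "this node holds the VIP"
#   fi
```

After master1 is shut down, running the usage lines on master2 should report that master2 holds the VIP.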

13. Install the k8s packages (all nodes)

[root@1-5 ~]# yum install -y kubelet-1.30.0 kubeadm-1.30.0 kubectl-1.30.0    # install the k8s packages (all nodes)

[root@1-5 ~]# systemctl enable kubelet    # start kubelet at boot

Created symlink /etc/systemd/system/multi-user.target.wants/kubelet.service → /usr/lib/systemd/system/kubelet.service.

[root@1-5 ~]# systemctl start kubelet    # start kubelet

14. Pull the k8s v1.30.0 images (all master nodes: master1, master2, and master3)

[root@master1 ~]# kubeadm config images pull \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.30.0    # pull the k8s v1.30.0 images

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.11.1

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.9

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.5.12-0

[root@master1 ~]#

[root@master2 ~]# kubeadm config images pull \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.11.1

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.9

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.5.12-0

[root@master2 ~]#

[root@master3 ~]# kubeadm config images pull \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.30.0

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.11.1

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.9

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.5.12-0

15. Generate the initial kubeadm configuration file on master1

[root@master1 ~]# kubeadm config print init-defaults > kubeadm.yaml    # generate the default kubeadm configuration file

[root@master1 ~]# vim kubeadm.yaml

apiVersion: kubeadm.k8s.io/v1beta3

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

#localAPIEndpoint:    # commented out for a multi-master HA cluster (leave it active for a single-master cluster)

#  advertiseAddress: 1.2.3.4    # commented out (multi-master HA cluster)

#  bindPort: 6443

nodeRegistration:

  criSocket: unix:///run/containerd/containerd.sock

  imagePullPolicy: IfNotPresent

#  name: node

  taints: null

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta3

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns: {}

etcd:

  local:

    dataDir: /var/lib/etcd

imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers    # points to the Aliyun mirror of the k8s images

kind: ClusterConfiguration

kubernetesVersion: 1.30.0

controlPlaneEndpoint: 192.168.7.199:16443    # added: the control-plane VIP (port 16443 avoids a conflict with the apiserver's 6443)

networking:

  dnsDomain: cluster.local

  podSubnet: 10.244.0.0/16    # added: the pod network range; a 10.x range avoids overlapping the 192.168.x host network

  serviceSubnet: 10.96.0.0/12

scheduler: {}

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1    # added section: run kube-proxy in IPVS mode

kind: KubeProxyConfiguration

mode: ipvs

---

apiVersion: kubelet.config.k8s.io/v1beta1    # added section: make kubelet use the systemd cgroup driver

kind: KubeletConfiguration

cgroupDriver: systemd

16. Create the k8s cluster from the kubeadm configuration file and show the log output

[root@master1 ~]# kubeadm init --config=kubeadm.yaml | tee kubeadm-init.log    # create the k8s cluster from the kubeadm configuration file and save the log output

Copy the following log output to a text file for later use:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join 192.168.7.199:16443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:dddca18cca4121bfecb147f8171110b59a795bcccb51ccdca721a5d14a8eb634 \

--control-plane    # command for master2 and master3 to join the cluster

Then you can join any number of worker nodes by running the following on each as root:

# command for worker nodes to join the cluster:

kubeadm join 192.168.7.199:16443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:dddca18cca4121bfecb147f8171110b59a795bcccb51ccdca721a5d14a8eb634
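Because the output was saved with `tee`, the worker join command can be recovered from kubeadm-init.log later instead of being copied by hand. The sketch below assumes the log layout shown above, in which the worker join command is the last `kubeadm join` block; the function name `worker_join_cmd` is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the last "kubeadm join" block of a kubeadm init log
# as a single line, with the trailing backslashes removed.
worker_join_cmd() {
  awk '
    /^[ \t]*kubeadm join / { cmd = ""; grab = 1 }     # a new join block starts
    grab {
      line = $0
      cont = sub(/[ \t]*\\[ \t]*$/, "", line)         # 1 if the line ended in a backslash
      gsub(/[ \t]+$/, "", line)
      gsub(/^[ \t]+/, "", line)
      cmd = (cmd == "" ? line : cmd " " line)
      if (!cont) grab = 0                             # block ends at the first line without one
    }
    END { print cmd }
  ' "$1"
}

# Usage on master1:
#   worker_join_cmd kubeadm-init.log
```

The bootstrap token in this log expires after the `ttl` set in kubeadm.yaml (24h here), so the recovered command is only useful within that window.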

17. Run the commands from the log output on master1:

[root@master1 ~]# mkdir -p $HOME/.kube    # create the hidden .kube directory

[root@master1 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config    # copy the k8s admin configuration file into the hidden directory

[root@master1 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config    # change the owner and group of the file

18. Create the directories for the k8s certificates and keys on master2

[root@master2 ~]# cd /root && mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/    # create the directories for the k8s certificates and keys

19. Copy the k8s certificates and keys to master2

[root@master1 ~]# scp /etc/kubernetes/pki/ca.crt master2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/ca.key master2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.key master2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.pub master2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.crt master2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.key master2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/etcd/ca.crt master2:/etc/kubernetes/pki/etcd/

scp /etc/kubernetes/pki/etcd/ca.key master2:/etc/kubernetes/pki/etcd/

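# The eight commands above copy the k8s certificates and keys to master2. (Sizing note: three control-plane nodes are generally enough, while worker nodes start at around 50, each with 8 CPU cores and 32 GB of memory.)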

ca.crt 100% 1107 2.4MB/s 00:00

ca.key 100% 1679 3.0MB/s 00:00

sa.key 100% 1679 3.9MB/s 00:00

sa.pub 100% 451 1.1MB/s 00:00

front-proxy-ca.crt 100% 1123 2.5MB/s 00:00

front-proxy-ca.key 100% 1679 3.8MB/s 00:00

ca.crt 100% 1094 2.7MB/s 00:00

ca.key 100% 1679 3.8MB/s 00:00
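The same eight files are copied again for master3 later, so the list can be kept in one place and looped over. This is only a sketch: the function name `copy_control_plane_certs` and its dry-run convention are assumptions for illustration, while the file list is exactly the one used above.

```shell
#!/usr/bin/env bash
PKI=/etc/kubernetes/pki
CERT_FILES=(ca.crt ca.key sa.key sa.pub
            front-proxy-ca.crt front-proxy-ca.key
            etcd/ca.crt etcd/ca.key)

# Hypothetical helper: copy the shared CA material to one control-plane host.
# By default it only prints the scp commands; pass "command" as the second
# argument to actually run them.
copy_control_plane_certs() {
  local host="$1" run="${2:-echo}" f
  for f in "${CERT_FILES[@]}"; do
    "$run" scp "$PKI/$f" "$host:$PKI/$f"
  done
}

# Usage on master1:
#   copy_control_plane_certs master2            # dry run, prints 8 scp commands
#   copy_control_plane_certs master2 command    # performs the copy
```

kubeadm can also distribute these files itself through `kubeadm init --upload-certs` together with `kubeadm join --certificate-key`; the manual copy is kept here because it is what this walkthrough uses.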

20. Join master2 to the cluster

[root@master2 ~]# kubeadm join 192.168.7.199:16443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:dddca18cca4121bfecb147f8171110b59a795bcccb51ccdca721a5d14a8eb634 \

--control-plane    # join master2 to the cluster as a control-plane node

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

21. Run the commands from the log output on master2

[root@master2 ~]# mkdir -p $HOME/.kube    # create the hidden .kube directory

[root@master2 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config    # copy the k8s admin configuration file into the hidden directory

[root@master2 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config    # change the owner and group of the file

[root@master1 ~]# kubectl get nodes    # view the node list

NAME STATUS ROLES AGE VERSION

master1 NotReady control-plane 9h v1.30.0

master2 NotReady control-plane 6m51s v1.30.0

22. Create the directories for the k8s certificates and keys on master3

[root@master3 ~]# cd /root && mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/    # create the directories for the k8s certificates and keys

23. Copy the k8s certificates and keys to master3

[root@master1 ~]# scp /etc/kubernetes/pki/ca.crt master3:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/ca.key master3:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.key master3:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.pub master3:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.crt master3:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.key master3:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/etcd/ca.crt master3:/etc/kubernetes/pki/etcd/

scp /etc/kubernetes/pki/etcd/ca.key master3:/etc/kubernetes/pki/etcd/

# The eight commands above copy the k8s certificates and keys to master3.

ca.crt 100% 1107 3.1MB/s 00:00

24. Generate a new join command on master1

[root@master1 ~]# kubeadm token create --print-join-command    # create a new token and print the matching join command

kubeadm join 192.168.7.199:16443 --token aikrya.cvs0m5kqdm0jyfvf --discovery-token-ca-cert-hash sha256:dddca18cca4121bfecb147f8171110b59a795bcccb51ccdca721a5d14a8eb634
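The new token changes, but the `--discovery-token-ca-cert-hash` value stays the same because it is derived from the cluster CA's public key. The sketch below follows the OpenSSL pipeline given in the kubeadm documentation and assumes an RSA CA key, which is what the key sizes in this walkthrough suggest; the function name `ca_cert_hash` is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the SHA-256 hash of the CA certificate's public key
# (DER-encoded), i.e. the value that follows "sha256:" in kubeadm join.
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Usage on a control-plane node:
#   echo "sha256:$(ca_cert_hash)"
```

Comparing this value with the hash in a join command is a quick way to check that the command belongs to the current cluster.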

25. Join master3 to the cluster

[root@master3 ~]# kubeadm join 192.168.7.199:16443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:dddca18cca4121bfecb147f8171110b59a795bcccb51ccdca721a5d14a8eb634 \

--control-plane    # join master3 to the cluster as a control-plane node

26. Run the commands from the log output on master3

[root@master3 ~]# mkdir -p $HOME/.kube    # create the hidden .kube directory

[root@master3 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config    # copy the k8s admin configuration file into the hidden directory

[root@master3 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config    # change the owner and group of the file

27. View the node list

[root@master1 ~]# kubectl get nodes    # view the node list

NAME STATUS ROLES AGE VERSION

master1 NotReady control-plane 18m v1.30.0

master2 NotReady control-plane 15m v1.30.0

master3 NotReady control-plane 86s v1.30.0
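All three nodes report NotReady at this point because, as the kubeadm init log says, a pod network still has to be deployed. To track which nodes are not yet Ready without reading the table by eye, a small filter is enough; the function name `not_ready_nodes` is an assumption for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes` output (with header) from stdin
# and print the names of nodes whose STATUS is not exactly "Ready".
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on master1:
#   kubectl get nodes | not_ready_nodes
```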

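28. Copy the k8s certificates and keys to node1 and node2

Worker nodes do not need these files in order to join: kubeadm join fetches ca.crt itself, and a ca.crt that is already present makes the join fail until it is deleted (see step 30).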

[root@master1 ~]# scp /etc/kubernetes/pki/ca.crt node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/ca.key node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.key node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.pub node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.crt node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.key node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/etcd/ca.crt node1:/etc/kubernetes/pki/etcd/

scp /etc/kubernetes/pki/etcd/ca.key node1:/etc/kubernetes/pki/etcd/

# The eight commands above copy the k8s certificates and keys to node1.

ca.crt 100% 1107 2.7MB/s 00:00

ca.key 100% 1675 2.3MB/s 00:00

sa.key 100% 1675 2.2MB/s 00:00

sa.pub 100% 451 1.1MB/s 00:00

front-proxy-ca.crt 100% 1123 2.8MB/s 00:00

front-proxy-ca.key 100% 1675 2.7MB/s 00:00

ca.crt 100% 1094 2.2MB/s 00:00

ca.key

[root@master1 ~]# scp /etc/kubernetes/pki/ca.crt node2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/ca.key node2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.key node2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.pub node2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.crt node2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.key node2:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/etcd/ca.crt node2:/etc/kubernetes/pki/etcd/

scp /etc/kubernetes/pki/etcd/ca.key node2:/etc/kubernetes/pki/etcd/

# The eight commands above copy the k8s certificates and keys to node2.

ca.crt 100% 1107 2.7MB/s 00:00

ca.key 100% 1675 4.3MB/s 00:00

sa.key 100% 1675 3.3MB/s 00:00

sa.pub 100% 451 761.7KB/s 00:00

front-proxy-ca.crt 100% 1123 3.1MB/s 00:00

front-proxy-ca.key 100% 1675 3.4MB/s 00:00

ca.crt 100% 1094 2.8MB/s 00:00

ca.key 100% 1679 3.6MB/s 00:00

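29. Create the certificate and key directories on node1 and node2 (they must exist before the copy in step 28)

[root@node1 ~]# mkdir -p /etc/kubernetes/pki/etcd    # create the certificate and key directories on node1

[root@node2 ~]# mkdir -p /etc/kubernetes/pki/etcd    # create the certificate and key directories on node2

30. If the join fails, delete the copied certificate

[root@node1 ~]# cd /etc/kubernetes/pki

[root@node1 pki]# rm ca.crt    # kubeadm join refuses to run when /etc/kubernetes/pki/ca.crt already exists, so delete it

rm: remove regular file 'ca.crt'? y

[root@node1 pki]# cd

31. Join node1 to the cluster, ignoring the system verification warnings

[root@node1 ~]# kubeadm join 192.168.7.199:16443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:c5ee15c87f3a826b7a411bd21009b9f43b48b3ea8443196888482e5eca0d5e30 --ignore-preflight-errors=SystemVerification    # join node1 to the cluster, ignoring system verification warnings; use the token and CA hash printed for your own cluster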

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-check] Waiting for a healthy kubelet. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 502.139241ms

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

32. If the join fails, delete the copied certificate

[root@node2 ~]# cd /etc/kubernetes/pki

[root@node2 pki]# rm ca.crt

rm: remove regular file 'ca.crt'? y

[root@node2 pki]# cd

33. Join node2 to the cluster, ignoring the system verification warnings

[root@node2 ~]# kubeadm join 192.168.7.199:16443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:c5ee15c87f3a826b7a411bd21009b9f43b48b3ea8443196888482e5eca0d5e30 --ignore-preflight-errors=SystemVerification

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-check] Waiting for a healthy kubelet. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 1.004242791s

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@node2 ~]#三十四、查看节点信息

[root@master1 ~]# kubectl get nodes 查看节点信息

NAME STATUS ROLES角色 AGE时间 VERSION

master1 NotReady control-plane 62m v1.30.0

master2 NotReady control-plane 59m v1.30.0

master3 NotReady control-plane 45m v1.30.0

node1 NotReady <none> 无 4m55s v1.30.0

node2 NotReady <none> 57s v1.30.0

[root@master1 ~]#三十五、将node1、node2节点打work的标签

[root@master1 ~]# kubectl label nodes node1 node-role.kubernetes.io/work=work 将node1节点打work的标签

node/node1 labeled

[root@master1 ~]# kubectl label nodes node2 node-role.kubernetes.io/work=work 将node2节点打work的标签

node/node2 labeled

[root@master1 ~]#[root@master1 ~]# kubectl get nodes 查看节点信息

NAME STATUS ROLES角色 AGE时间 VERSION

master1 NotReady control-plane 68m v1.30.0

master2 NotReady control-plane 65m v1.30.0

master3 NotReady control-plane 51m v1.30.0

node1 NotReady work 加入工作节点 10m v1.30.0

node2 NotReady work 6m50s v1.30.0

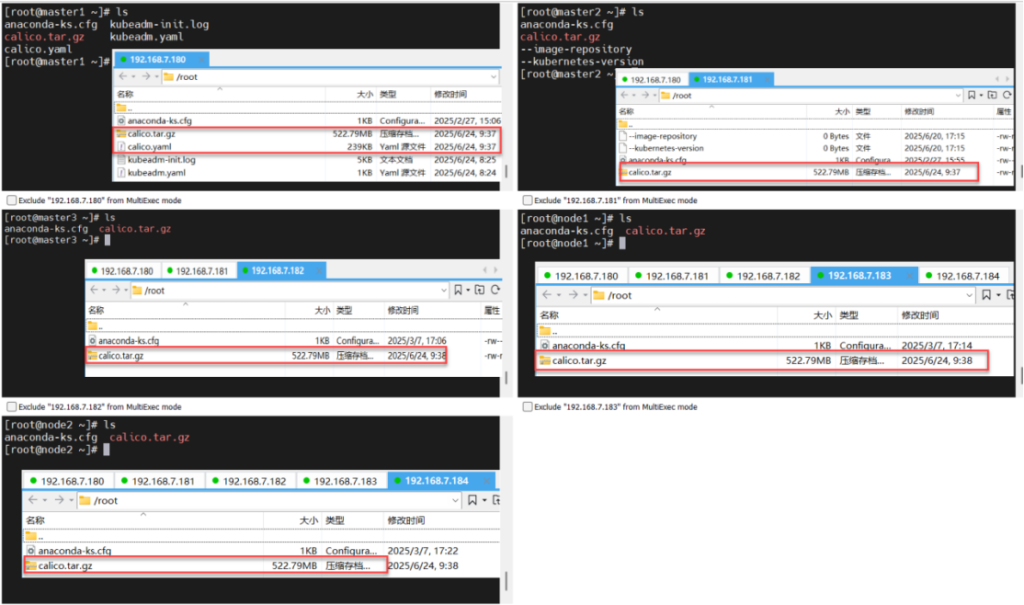

[root@master1 ~]#三十六、节点1-5上传calico压缩包

[root@1 ~]# ls

anaconda-ks.cfg kubeadm-init.log

calico.tar.gz kubeadm.yaml

calico.yaml

[root@master1 ~]#

[root@2-5 ~]# ls

anaconda-ks.cfg

calico.tar.gz

--image-repository

--kubernetes-version

37. Import the calico images on all nodes

[root@1-5 ~]# ctr -n k8s.io images import calico.tar.gz

unpacking docker.io/calico/cni:v3.26.1 (sha256:dbdd8749b4d394abf14b528fbc5a41d64953c7e4d08f1a2133e9300affe0e98)...done

unpacking docker.io/calico/node:v3.26.1 (sha256:86644b66a7ba300e287656ad141e5095ffd1a282648d80a01d29c46c6c4870c)...done

unpacking docker.io/calico/kube-controllers:v3.26.1 (sha256:0157cc18893d4ebbfbbec451cc022bfcc832f3c872f9fcd5534bb45586ac307)...done

unpacking docker.io/calico/pod2daemon-flexvol:v3.26.1 (sha256:b21921f913c9e438a58e06f9fbc4c8ee148278fe785d2324fb0b15ff65c74d2)...done
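Since the import has to succeed on all five nodes, a quick check that the four v3.26.1 images listed above are present can save a failed pod start later. This is a minimal sketch; the function name `missing_calico_images` is hypothetical, and it expects the image reference list as its only argument.

```shell
#!/usr/bin/env bash
# Hypothetical helper: given a newline-separated list of image references,
# print every expected calico v3.26.1 image that is NOT in the list.
missing_calico_images() {
  local refs="$1" name
  for name in cni node kube-controllers pod2daemon-flexvol; do
    grep -qxF "docker.io/calico/${name}:v3.26.1" <<<"$refs" \
      || echo "docker.io/calico/${name}:v3.26.1"
  done
}

# Usage on each node (ctr's -q flag prints image references only):
#   missing_calico_images "$(ctr -n k8s.io images ls -q)"
```

An empty result means all four images were imported.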

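38. Edit the calico manifest on master1

[root@master1 ~]# vim calico.yaml    # edit the calico manifest (.yaml is the file extension used for k8s manifests)

Line 4768: value: "interface=ens160"    # set the network interface to ens160 (typically the value of the IP_AUTODETECTION_METHOD variable)

39. Apply the calico manifest

[root@master1 ~]# kubectl apply -f calico.yaml    # apply the calico manifest

[root@master1 ~]# kubectl get nodes    # view the node list; the nodes become Ready once calico is running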

NAME STATUS ROLES AGE VERSION

master1 Ready control-plane 99m v1.30.0

master2 Ready control-plane 97m v1.30.0

master3 Ready control-plane 83m v1.30.0

node1 Ready work 42m v1.30.0

node2 Ready work 38m v1.30.0

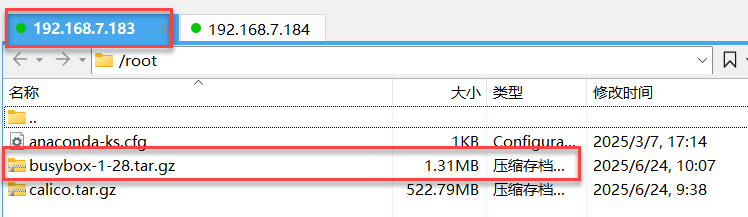

40. Upload busybox 1.28 to node1 and node2

[root@node1 ~]# ls

anaconda-ks.cfg busybox-1-28.tar.gz calico.tar.gz

[root@node1 ~]#

[root@node2 ~]# ls

anaconda-ks.cfg busybox-1-28.tar.gz calico.tar.gz

[root@node2 ~]#

[root@node1 ~]# ctr -n k8s.io images import busybox-1-28.tar.gz    # import the busybox test image on node1 (calico.tar.gz holds the network plugin images)

unpacking docker.io/library/busybox:1.28 (sha256:585093da3a716161ec2b2595011051a90d2f089bc2a25b4a34a18e2cf542527c)...done

[root@node2 ~]# ctr -n k8s.io images import busybox-1-28.tar.gz    # import the busybox test image on node2

unpacking docker.io/library/busybox:1.28 (sha256:585093da3a716161ec2b2595011051a90d2f089bc2a25b4a34a18e2cf542527c)...done

41. Run a busybox pod from the busybox image and open a shell in it

<!-- wp:code -->

<pre class="wp-block-code"><code>[root@master1 ~]# kubectl run busybox --image docker.io/library/busybox:1.28 --image-pull-policy=IfNotPresent --restart=Never --rm -it busybox -- sh    # run a busybox pod from the busybox image and open a shell in it

If you don't see a command prompt, try pressing enter.

/ #

/ # ping www.baidu.com

PING www.baidu.com (110.242.69.21): 56 data bytes

64 bytes from 110.242.69.21: seq=0 ttl=127 time=20.140 ms

64 bytes from 110.242.69.21: seq=1 ttl=127 time=13.469 ms

64 bytes from 110.242.69.21: seq=2 ttl=127 time=14.431 ms

^C

--- www.baidu.com ping statistics ---

3 packets transmitted, 3 packets received, 0% packet loss

round-trip min/avg/max = 13.469/16.013/20.140 ms

/ # ping 110.242.69.21

PING 110.242.69.21 (110.242.69.21): 56 data bytes

64 bytes from 110.242.69.21: seq=0 ttl=127 time=38.787 ms

64 bytes from 110.242.69.21: seq=1 ttl=127 time=14.117 ms

64 bytes from 110.242.69.21: seq=2 ttl=127 time=14.150 ms

64 bytes from 110.242.69.21: seq=3 ttl=127 time=14.574 ms

^C

--- 110.242.69.21 ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 14.117/20.407/38.787 ms

/ # nslookup kubernetes.default.svc.cluster.local

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes.default.svc.cluster.local

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

/ # exit

pod "busybox" deleted

[root@master1 ~]#</code></pre>

<!-- /wp:code -->
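The interactive session above shows that cluster DNS resolves `kubernetes.default.svc.cluster.local` to 10.96.0.1, the first address of the service subnet. For a repeatable check, the resolved address can be extracted from the busybox `nslookup` output; the function name `resolved_ip` is an assumption for illustration, and it relies on the output layout shown in the session.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read busybox nslookup output from stdin and print the
# first address listed after the "Name:" line.
resolved_ip() {
  awk '/^Name:/ { seen = 1; next }
       seen && /^Address/ { print $3; exit }'
}

# Usage on master1 (non-interactive variant of the pod above; the pod name
# dns-test is arbitrary):
#   kubectl run dns-test --image docker.io/library/busybox:1.28 \
#     --image-pull-policy=IfNotPresent --restart=Never --rm -i \
#     -- nslookup kubernetes.default.svc.cluster.local | resolved_ip
```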

42. Result: take snapshots of master1, master2, master3, node1, and node2. Snapshot name: high-availability k8s cluster setup complete