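Key points:

We noted earlier that Kubernetes is designed so that storage administrators manage PVs while users simply consume storage through PVCs. In the exercises so far, however, we still had to manage both the PV and the PVC ourselves. In practice, the storage administrator can configure a StorageClass for dynamic provisioning in advance, so that PVs are created automatically in response to PVCs.

1. Lab environment: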

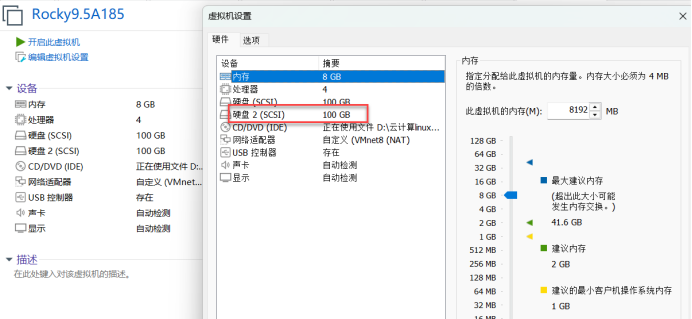

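Revert master1, node1, and node2 to the "single-node cluster" snapshot; the .185 host only needs to be powered on.

Revert the NFS host (192.168.7.185) to the "k8s new system" snapshot and add a new 100 GB disk.

The storage needs a dedicated disk of its own; it must not share the system disk sda.

2. Partition and mount the new disk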

[root@nfs ~]# fdisk -l

Disk /dev/sda: 100 GiB, 107374182400 bytes, 209715200 sectors

Disk model: VMware Virtual S

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: dos

Disk identifier: 0x1f9c691f

Device Boot Start End Sectors Size Id Type

/dev/sda1 2048 6143 4096 2M 83 Linux

/dev/sda2 * 6144 1030143 1024000 500M 83 Linux

/dev/sda3 1030144 209715199 208685056 99.5G 8e Linux LVM

Disk /dev/sdb: 100 GiB, 107374182400 bytes, 209715200 sectors

Disk model: VMware Virtual S

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/rl-root: 91.51 GiB, 98255765504 bytes, 191905792 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/rl-swap: 8 GiB, 8589934592 bytes, 16777216 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

[root@nfs ~]# gdisk /dev/sdb

GPT fdisk (gdisk) version 1.0.7

Partition table scan:

MBR: not present

BSD: not present

APM: not present

GPT: not present

Creating new GPT entries in memory.

Command (? for help): n

Partition number (1-128, default 1): 1

First sector (34-209715166, default = 2048) or {+-}size{KMGTP}:

Last sector (2048-209715166, default = 209715166) or {+-}size{KMGTP}:

Current type is 8300 (Linux filesystem)

Hex code or GUID (L to show codes, Enter = 8300):

Changed type of partition to 'Linux filesystem'

Command (? for help): w

Final checks complete. About to write GPT data. THIS WILL OVERWRITE EXISTING

PARTITIONS!!

Do you want to proceed? (Y/N): y

OK; writing new GUID partition table (GPT) to /dev/sdb.

The operation has completed successfully.

[root@nfs ~]# mkfs.xfs /dev/sdb1

meta-data=/dev/sdb1 isize=512 agcount=4, agsize=6553535 blks

= sectsz=512 attr=2, projid32bit=1

= crc=1 finobt=1, sparse=1, rmapbt=0

= reflink=1 bigtime=1 inobtcount=1 nrext64=0

data = bsize=4096 blocks=26214139, imaxpct=25

= sunit=0 swidth=0 blks

naming =version 2 bsize=4096 ascii-ci=0, ftype=1

log =internal log bsize=4096 blocks=16384, version=2

= sectsz=512 sunit=0 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

[root@nfs ~]# blkid

/dev/mapper/rl-swap: UUID="03fb4959-b91d-4d49-b0eb-422ae40092d3" TYPE="swap"

/dev/sdb1: UUID="4aff442f-df80-4c50-ac94-b369b7ef6901" TYPE="xfs" PARTLABEL="Linux filesystem" PARTUUID="02379db9-13c7-4cd0-84b3-0b43e4886070"

/dev/sr0: UUID="2024-11-16-01-52-31-00" LABEL="Rocky-9-5-x86_64-dvd" TYPE="iso9660" PTUUID="5d896d99" PTTYPE="dos"

/dev/mapper/rl-root: UUID="0088d07b-d503-4a80-9c3c-d5e990fc55df" TYPE="xfs"

/dev/sda2: UUID="5c3a0aa0-09e4-4cc3-9f1a-28664bf590d4" TYPE="xfs" PARTUUID="1f9c691f-02"

/dev/sda3: UUID="1bJJ7D-XYuc-WKJc-jtwo-TBgr-B4ws-lo4Jiu" TYPE="LVM2_member" PARTUUID="1f9c691f-03"

/dev/sda1: PARTUUID="1f9c691f-01"

[root@nfs ~]# mkdir /nfs_dir

[root@nfs ~]#

[root@nfs ~]# vim /etc/fstab

UUID=4aff442f-df80-4c50-ac94-b369b7ef6901 /nfs_dir xfs defaults 0 0

[root@nfs ~]#

[root@nfs ~]# systemctl daemon-reload

[root@nfs ~]# mount -a

[root@nfs ~]# df -hT

Filesystem Type Size Used Avail Use% Mounted on

devtmpfs devtmpfs 4.0M 0 4.0M 0% /dev

tmpfs tmpfs 3.8G 0 3.8G 0% /dev/shm

tmpfs tmpfs 1.5G 9.1M 1.5G 1% /run

/dev/mapper/rl-root xfs 92G 3.8G 88G 5% /

/dev/sda2 xfs 436M 297M 140M 69% /boot

tmpfs tmpfs 766M 4.0K 766M 1% /run/user/0

/dev/sdb1 xfs 100G 746M 100G 1% /nfs_dir
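The fstab entry used above mounts the partition by UUID rather than by device name, which keeps the mount stable if the disks are ever enumerated in a different order. As a minimal sketch, the entry can be generated instead of typed by hand. `fstab_line` is a hypothetical helper, not a standard tool, and the commented lines only suggest how it might be combined with `blkid` on the NFS server.

```shell
#!/bin/sh
# Hypothetical helper: print an /etc/fstab entry that mounts by UUID.
# Arguments: <uuid> <mount point> <filesystem type>
fstab_line() {
    printf 'UUID=%s %s %s defaults 0 0\n' "$1" "$2" "$3"
}

# Demo with the UUID that blkid reported for /dev/sdb1 in this lab.
fstab_line 4aff442f-df80-4c50-ac94-b369b7ef6901 /nfs_dir xfs

# On the NFS server itself (as root) this could look like:
#   uuid=$(blkid -s UUID -o value /dev/sdb1)
#   fstab_line "$uuid" /nfs_dir xfs >> /etc/fstab
#   mount -a && findmnt /nfs_dir
```

Running the demo prints the same line that was added to /etc/fstab in this step.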

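[root@nfs ~]#

3. Install and start NFS

[root@nfs ~]# dnf -y install nfs-utils    # install NFS

[root@nfs ~]# systemctl enable --now nfs-server    # start NFS and enable it at boot

Created symlink /etc/systemd/system/multi-user.target.wants/nfs-server.service → /usr/lib/systemd/system/nfs-server.service.

4. Edit the NFS exports file

[root@nfs ~]# vim /etc/exports    # edit the NFS exports file

/nfs_dir *(rw,no_root_squash) # export /nfs_dir to any client IP, read-write; no_root_squash means a client's root user keeps root privileges on the share instead of being mapped to the anonymous user

[root@nfs ~]# exportfs -avr    # publish the export so that other nodes can access it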

exporting *:/nfs_dir

[root@nfs ~]#

[root@nfs ~]# showmount -e    # list the NFS exports

Export list for nfs:

/nfs_dir *
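Exporting to `*` with `no_root_squash` lets root on any host that can reach the server write to the share as root. That is convenient in a lab; a tighter variant would limit the export to the cluster's subnet. The sketch below prints both forms. `exports_line` is a hypothetical helper, and the 192.168.7.0/24 subnet is an assumption inferred from the server address, not something stated in these notes.

```shell
#!/bin/sh
# Hypothetical helper: print one /etc/exports entry.
# Arguments: <directory> <client spec> <comma-separated options>
exports_line() {
    printf '%s %s(%s)\n' "$1" "$2" "$3"
}

# The entry used in this lab: any client, read-write, root not squashed.
exports_line /nfs_dir '*' rw,no_root_squash

# A tighter variant; the 192.168.7.0/24 subnet is an assumption.
exports_line /nfs_dir 192.168.7.0/24 rw,no_root_squash

# After editing /etc/exports, re-export with: exportfs -avr
```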

[root@nfs ~]#

5. Delete pv1:

[root@nfs ~]# cd /nfs_dir/

[root@nfs nfs_dir]# ls

pv1

[root@nfs nfs_dir]# rm -rf pv1

[root@nfs nfs_dir]#

[root@nfs nfs_dir]# showmount -e

Export list for nfs:

/nfs_dir *

[root@nfs nfs_dir]# cd

[root@nfs ~]#

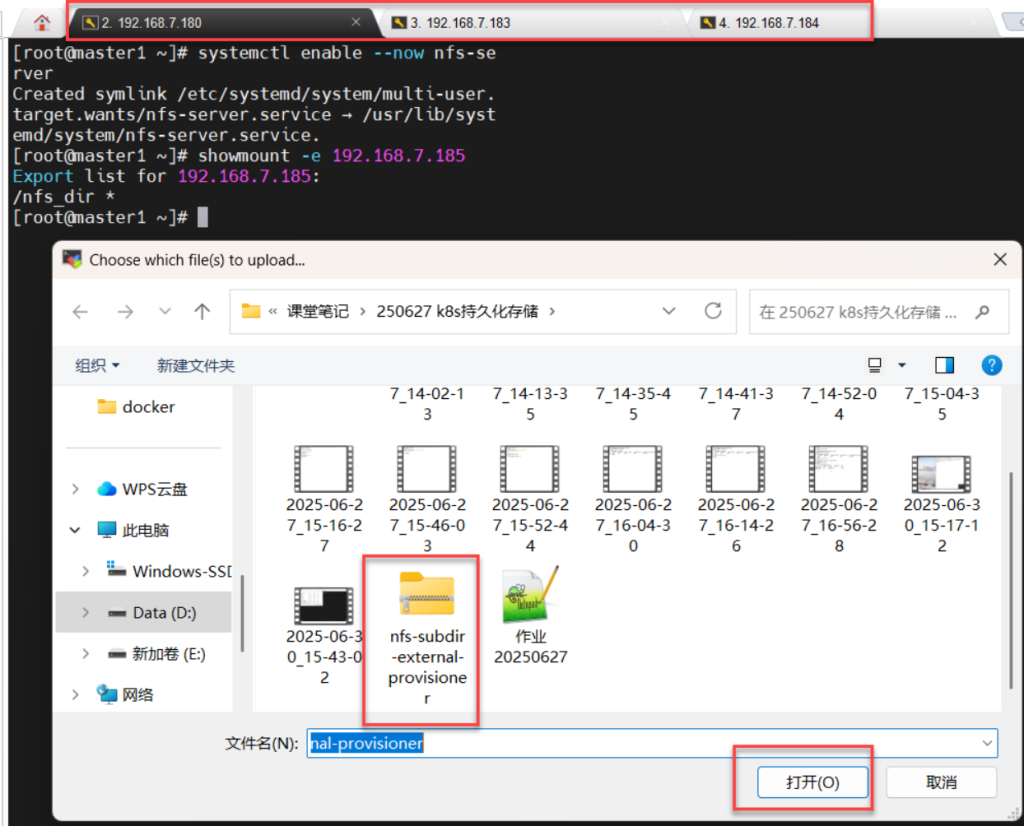

[root@nfs ~]#

6. Install and start NFS on master1, node1, and node2:

[root@node1 ~]# dnf -y install nfs-utils

[root@master1, node1, node2 ~]# systemctl enable --now nfs-server    # optional on clients: mounting an NFS share only needs nfs-utils

Created symlink /etc/systemd/system/multi-user.target.wants/nfs-server.service → /usr/lib/systemd/system/nfs-server.service.

7. Query the shared directories on the NFS server 192.168.7.185

[root@master1, node1, node2 ~]# showmount -e 192.168.7.185    # list the directories exported by the NFS server 192.168.7.185

Export list for 192.168.7.185:

/nfs_dir *
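Every node must be able to see the export, because the kubelet on whichever node runs a pod performs the NFS mount. A minimal sketch of turning the visual check into a scripted one follows. `has_export` is a hypothetical helper that only parses text in the `showmount -e` format shown above.

```shell
#!/bin/sh
# Hypothetical helper: succeed if <path> is listed in `showmount -e`
# output read from stdin. The first line is the "Export list for" header.
has_export() {
    awk -v want="$1" 'NR > 1 && $1 == want { found = 1 } END { exit !found }'
}

# Demo against the output captured in this step.
sample='Export list for 192.168.7.185:
/nfs_dir *'
if printf '%s\n' "$sample" | has_export /nfs_dir; then
    echo "export visible"
fi

# On a cluster node this could be used as:
#   showmount -e 192.168.7.185 | has_export /nfs_dir || echo "export missing"
```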

[root@master1, node1, node2 ~]#

8. Upload the nfs-provisioner image to master1, node1, and node2

[root@master1\node1\node2 ~]# ls

anaconda-ks.cfg

calico.tar.gz

calico.yaml

kubeadm.yaml

nfs-subdir-external-provisioner.tar    # image archive for the NFS provisioner; it must be imported on every node

[root@master1\node1\node2 ~]# ctr -n k8s.io images import nfs-subdir-external-provisioner.tar    # import the NFS provisioner image on every node

unpacking k8s.gcr.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2 (sha256:925a999977a8fe14dd85d14aadd741144c7f8acfb2fe79f41c353bf26a0c58b7)...done
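Because the image is loaded from a tar archive rather than pulled from a registry, a node that lacks it cannot start the provisioner pod. The sketch below shows one way to check for the image on a node. `has_image` is a hypothetical helper that matches an exact image reference in a list of references, one per line, which is the format `ctr images ls -q` is expected to print.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the exact image reference <ref>
# appears on its own line in the list read from stdin.
has_image() {
    grep -Fxq -- "$1"
}

ref='k8s.gcr.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2'

# Demo with a one-line list containing the reference imported above.
if printf '%s\n' "$ref" | has_image "$ref"; then
    echo "image present"
fi

# On each node this could be used as:
#   ctr -n k8s.io images ls -q | has_image "$ref" || echo "image missing"
```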

[root@master1 ~]#

9. Create the manifest for the NFS StorageClass

[root@master1 ~]# vim nfs-sc.yaml    # create the manifest for the NFS StorageClass

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: kube-system
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
# RBAC permissions that the provisioner needs in the cluster
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["list", "watch", "create", "update", "patch"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Deployment
apiVersion: apps/v1
metadata:
  name: nfs-provisioner-01
  namespace: kube-system
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-provisioner-01
  template:
    metadata:
      labels:
        app: nfs-provisioner-01
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: k8s.gcr.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2
          imagePullPolicy: IfNotPresent
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: nfs-provisioner-01  # provisioner name; the StorageClass refers to it
            - name: NFS_SERVER
              value: 192.168.7.185  # address of the NFS server
            - name: NFS_PATH
              value: /nfs_dir  # directory exported by the NFS server
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.7.185  # address of the NFS server
            path: /nfs_dir  # directory exported by the NFS server
---
# Using Alibaba Cloud NAS requires extra configuration: https://help.aliyun.com/document_detail/130727.html?spm=a2c4g.11174283.6.715.1aad2ceeUrijYZ
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-boge
provisioner: nfs-provisioner-01
# Supported policies: Delete, Retain; the default is Delete
reclaimPolicy: Retain

10. Apply the NFS StorageClass manifest on master1

[root@master1 ~]# kubectl apply -f nfs-sc.yaml    # apply the NFS StorageClass manifest (to remove it again: kubectl delete -f nfs-sc.yaml)

serviceaccount/nfs-client-provisioner created

clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner created

clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner created

deployment.apps/nfs-provisioner-01 created

storageclass.storage.k8s.io/nfs-boge created

[root@master1 ~]#

11. Watch the pods in the kube-system namespace

[root@master1 ~]# kubectl -n kube-system get pod -w    # watch pod status changes in the kube-system namespace

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-7dc5458bc6-5jztl 1/1 Running 2 (44m ago) 10d

calico-node-clzfg 1/1 Running 2 (44m ago) 10d

calico-node-kbqjt 1/1 Running 2 (44m ago) 10d

calico-node-ng6b2 1/1 Running 2 (44m ago) 10d

coredns-7c445c467-kff9r 1/1 Running 2 (44m ago) 10d

coredns-7c445c467-mmcg7 1/1 Running 2 (44m ago) 10d

etcd-master1 1/1 Running 2 (44m ago) 10d

kube-apiserver-master1 1/1 Running 2 (44m ago) 10d

kube-controller-manager-master1 1/1 Running 2 (44m ago) 10d

kube-proxy-6kdb8 1/1 Running 2 (44m ago) 10d

kube-proxy-gq9js 1/1 Running 2 (44m ago) 10d

kube-proxy-vjm2p 1/1 Running 2 (44m ago) 10d

kube-scheduler-master1 1/1 Running 2 (44m ago) 10d

nfs-provisioner-01-677fb49774-l2jc2 1/1 Running 0 44s    # <- the provisioner pod; this is the line to check

^C[root@master1 ~]#
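Watching with `-w` and pressing Ctrl-C works interactively; in a script the same check has to be made on the text. The sketch below parses output in the `kubectl get pod` column layout shown above. `pod_running` is a hypothetical helper, and the sample line is copied from this step's output.

```shell
#!/bin/sh
# Hypothetical helper: succeed if a pod whose name starts with <prefix>
# is Running with all of its containers ready (READY column "n/n").
# Reads `kubectl get pod` style output from stdin.
pod_running() {
    awk -v prefix="$1" '
        index($1, prefix) == 1 && $3 == "Running" {
            split($2, ready, "/")
            if (ready[1] == ready[2]) found = 1
        }
        END { exit !found }'
}

# Demo against the line captured above.
sample='NAME READY STATUS RESTARTS AGE
nfs-provisioner-01-677fb49774-l2jc2 1/1 Running 0 44s'
if printf '%s\n' "$sample" | pod_running nfs-provisioner-01; then
    echo "provisioner is up"
fi

# On master1 this could be used as:
#   kubectl -n kube-system get pod | pod_running nfs-provisioner-01
# kubectl can also block until the Deployment is ready:
#   kubectl -n kube-system rollout status deployment/nfs-provisioner-01
```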

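[root@master1 ~]#

(Once the StorageClass exists, only a PVC needs to be created; there is no need to create a PV by hand.)

12. Create the PVC manifest on master1

[root@master1 ~]# vim pvc-sc.yaml    # create the PVC manifest that uses the StorageClass

kind: PersistentVolumeClaim    # resource type: PersistentVolumeClaim (PVC)
apiVersion: v1                 # API version
metadata:
  name: pvc-sc                 # name of the claim
spec:
  storageClassName: nfs-boge   # StorageClass to provision from
  accessModes:
    - ReadWriteMany            # read-write, mountable by multiple nodes
  resources:
    requests:
      storage: 5Gi             # requested capacity: 5 GiB

13. Check the /nfs_dir/ directory on the NFS server (after the claim has been applied with kubectl apply -f pvc-sc.yaml):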

[root@nfs ~]# cd /nfs_dir/

[root@nfs nfs_dir]# ll

total 0

drwxrwxrwx 2 root root 6 Jun 30 16:04 default-pvc-sc-pvc-7e22642b-f2d5-449b-8bc1-6cd5a817e83f
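The directory name is not random. By default, nfs-subdir-external-provisioner names each provisioned directory `${namespace}-${pvcName}-${pvName}`: here the namespace is `default`, the claim is `pvc-sc`, and the rest is the generated PV name. The sketch below reassembles the name. `pv_dir_name` is a hypothetical helper for illustration only.

```shell
#!/bin/sh
# Hypothetical helper: rebuild the directory name that the provisioner
# creates under the export, following <namespace>-<pvcName>-<pvName>.
pv_dir_name() {
    printf '%s-%s-%s\n' "$1" "$2" "$3"
}

# Demo with the values from this lab.
pv_dir_name default pvc-sc pvc-7e22642b-f2d5-449b-8bc1-6cd5a817e83f

# On master1 the PV name can be read from the bound claim:
#   kubectl get pvc pvc-sc -o jsonpath='{.spec.volumeName}'
```

The demo prints the same name as the directory listed above, which makes it easy to map a directory on the NFS server back to the claim that owns it.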

[root@nfs nfs_dir]#

14. Edit the nginx manifest on master1:

[root@master1 ~]# vim nginx.yaml

# cat nginx.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: nginx
  name: nginx
spec:
  ports:
    - port: 80
      protocol: TCP
      targetPort: 80
  selector:
    app: nginx
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
        - image: nginx:1.21.6
          name: nginx
          volumeMounts:  # mount the volume over the nginx container's default web root
            - name: html-files
              mountPath: "/usr/share/nginx/html"
      volumes:
        - name: html-files
          persistentVolumeClaim:
            claimName: pvc-sc  # changed from pv1 so that the pod's data is stored on NFS

15. Upload the nginx image to node1 and node2

[root@master1 ~]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-6b687c9b78-v9hrk 0/1 Pending 0 67s

[root@master1 ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-6b687c9b78-v9hrk 0/1 Pending 0 80s <none> <none> <none> <none>

[root@master1 ~]#

[root@node1 ~]# ctr -n k8s.io images import nginx-1.21.6.tar    # import the nginx image on node1 and node2

unpacking docker.io/library/nginx:1.21.6 (sha256:94b808e393739b5363decf631a746d0241083d40eb05f07200a6d1c0c16f54b8)...done

[root@node1 ~]#

[root@node2 ~]# ctr -n k8s.io images import nginx-1.21.6.tar

unpacking docker.io/library/nginx:1.21.6 (sha256:94b808e393739b5363decf631a746d0241083d40eb05f07200a6d1c0c16f54b8)...done

[root@node2 ~]#

16. Delete the nginx resources and re-apply the manifest:

[root@master1 ~]# kubectl delete -f nginx.yaml

service "nginx" deleted

deployment.apps "nginx" deleted

[root@master1 ~]# kubectl apply -f nginx.yaml    # re-apply the nginx manifest, which now uses the claim pvc-sc

service/nginx created

deployment.apps/nginx created

[root@master1 ~]# kubectl exec -it nginx-6b687c9b78-v9hrk -- bash

Error from server (NotFound): pods "nginx-6b687c9b78-v9hrk" not found

[root@master1 ~]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-8444fcb447-8p9vz 0/1 ContainerCreating 0 19s    # the container is still being created (the nginx image has not been imported yet or the container has not started)

[root@master1 ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-8444fcb447-8p9vz 0/1 ErrImagePull 0 34s 10.244.166.129 node1 <none> <none>

[root@master1 ~]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-8444fcb447-8p9vz 1/1 Running 0 3m43s

[root@master1 ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-8444fcb447-8p9vz 1/1 Running 0 3m46s 10.244.166.129 node1 <none> <none>

[root@master1 ~]#

17. Enter the nginx container, then look up the Service and its cluster IP

[root@master1 ~]# kubectl exec -it nginx-8444fcb447-8p9vz -- bash    # open a shell inside the nginx container

root@nginx-8444fcb447-8p9vz:/#

root@nginx-8444fcb447-8p9vz:/# echo "StorageClass userd" > /usr/share/nginx/html/index.html

root@nginx-8444fcb447-8p9vz:/# exit

exit

[root@master1 ~]# kubectl get svc    # list the Services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 10d

nginx ClusterIP 10.96.3.227 <none> 80/TCP 10m

[root@master1 ~]#

[root@master1 ~]#

[root@master1 ~]# curl 10.96.3.227    # request the nginx Service's cluster IP

StorageClass userd

[root@master1 ~]#

18. After accessing the cluster IP, view the nginx test page on the NFS server

[root@nfs nfs_dir]# cat default-pvc-sc-pvc-7e22642b-f2d5-449b-8bc1-6cd5a817e83f/index.html    # the same test page, read directly from the NFS server

StorageClass userd

[root@nfs nfs_dir]#